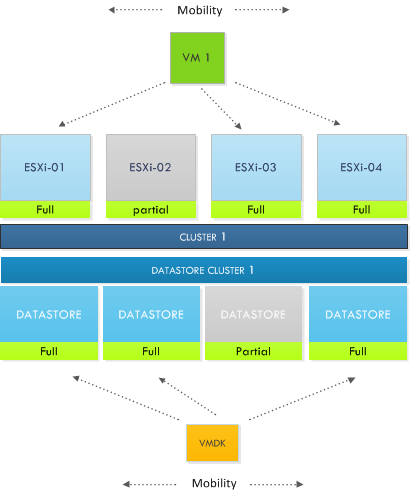

The first article in this series about architecture and design decisions focuses on the connectivity of the datastores within the datastore cluster. Connectivity between ESXi hosts and datastores in the datastore cluster affects the initial placement and load balancing decisions made by DRS and Storage DRS (SDRS). Although connecting a datastore to all ESXi hosts inside a cluster is common practice, we still come across partially connected datastores in virtual environments.

What is the impact of a partially connected datastore that is a member of a datastore cluster connected to a DRS cluster? What interoperability problems can you expect, and what is the impact of this design on DRS and SDRS load balancing operations?

Let’s start with the basic terminology.

Fully connected datastore clusters

A datastore cluster is fully connected when every datastore in it is attached to all ESXi hosts in the cluster. This is a recommendation, but it is not enforced.

Partially connected datastore clusters

If a datastore is connected to a subset of ESXi hosts inside the DRS cluster, the datastore cluster is treated as a partially connected datastore cluster.
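To make the two definitions concrete, here is a minimal sketch in Python. It is an illustration only, not how vCenter stores or evaluates connectivity; the function name `classify_datastore_cluster` and the host and datastore names are made up for the example.

```python
from typing import Dict, Set


def classify_datastore_cluster(
    cluster_hosts: Set[str],
    datastore_hosts: Dict[str, Set[str]],
) -> str:
    """Classify a datastore cluster from per-datastore host connectivity.

    datastore_hosts maps each datastore name to the set of hosts it is
    connected to (hypothetical input shape, chosen for illustration).
    """
    for connected in datastore_hosts.values():
        # One datastore that misses even a single cluster host is enough
        # to make the whole datastore cluster partially connected.
        if not cluster_hosts <= connected:
            return "partially connected"
    return "fully connected"


hosts = {"esxi-01", "esxi-02", "esxi-03"}
fully = {"ds-01": set(hosts), "ds-02": set(hosts)}
partially = {"ds-01": set(hosts), "ds-02": {"esxi-01", "esxi-02"}}

print(classify_datastore_cluster(hosts, fully))
print(classify_datastore_cluster(hosts, partially))
```

Note that the classification applies to the datastore cluster as a whole: a single datastore with a missing host connection changes the result.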

Now what happens if the DRS cluster is connected to partially connected datastores? It’s important to understand that the goal of both DRS and SDRS is resource availability, and the key to offering resource availability is to have as much mobility as possible. SDRS will not generate any migration recommendation that reduces the compatibility of a virtual machine regarding datastore connections. Virtual machine to host compatibility is captured in compatibility lists.

Compatibility list

Inside the cluster, a VM-host compatibility list is generated for each virtual machine. The compatibility list determines which ESXi hosts in the cluster have the network and storage configuration that allows the virtual machine to successfully come online. Membership of mandatory VM-Host affinity rules is also reflected in the compatibility list. If the network portgroup or datastore is not available on the host, or the host is not listed in the host group of the mandatory affinity rule, the ESXi host is deemed incompatible to host that virtual machine.
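The three checks described above (portgroup, datastore, mandatory affinity rule) can be sketched as a simple filter. This is a simplified model under our own assumptions; the `Host` and `VM` classes and the `compatibility_list` function are hypothetical names for illustration and do not correspond to any vSphere API.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass
class Host:
    name: str
    portgroups: Set[str]
    datastores: Set[str]


@dataclass
class VM:
    name: str
    portgroups: Set[str]
    datastores: Set[str]
    # Hosts in the host group of a mandatory VM-Host affinity rule.
    # None means no mandatory rule applies to this virtual machine.
    mandatory_host_group: Optional[Set[str]] = None


def compatibility_list(vm: VM, hosts: List[Host]) -> List[str]:
    """Return the names of hosts that can run the VM (illustrative model)."""
    compatible = []
    for host in hosts:
        if not vm.portgroups <= host.portgroups:
            continue  # a required network portgroup is missing
        if not vm.datastores <= host.datastores:
            continue  # a required datastore is not connected
        if (vm.mandatory_host_group is not None
                and host.name not in vm.mandatory_host_group):
            continue  # host is outside the mandatory host group
        compatible.append(host.name)
    return compatible


cluster = [
    Host("esxi-01", {"VM Network"}, {"ds-01", "ds-02"}),
    Host("esxi-02", {"VM Network"}, {"ds-01", "ds-02"}),
    Host("esxi-03", {"VM Network"}, {"ds-01"}),  # ds-02 not connected
]
vm_a = VM("vm-a", {"VM Network"}, {"ds-02"})
vm_b = VM("vm-b", {"VM Network"}, {"ds-02"}, {"esxi-02", "esxi-03"})

print(compatibility_list(vm_a, cluster))
print(compatibility_list(vm_b, cluster))
```

In this example `vm-b` ends up with a single compatible host: the mandatory rule allows two hosts, but one of them is not connected to the datastore.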

As mentioned, both DRS and SDRS focus on resource availability and resource outage avoidance. Therefore, SDRS prefers a datastore that is connected to all hosts over a datastore that is partially connected. Connecting datastores to a subset of hosts reduces the compatibility list, which limits the mobility of the virtual machine and in turn reduces the efficiency of DRS and SDRS.

Finding a suitable location or load balancing becomes more challenging when the cluster and datastore cluster are partially connected. During initial placement, the selection of a datastore may impact the mobility of the virtual machine amongst the hosts, while the selection of a host impacts the mobility of the virtual machine amongst the datastores in the datastore cluster.

Let’s explore this impact a little bit further. When generating migration recommendations, DRS selects a host that can provide enough resources to satisfy the virtual machine’s resource entitlement while lowering the imbalance of the cluster. DRS might come across a lightly utilized host while the other hosts inside the cluster are highly utilized. Unfortunately, the lightly utilized host is not connected to the datastore containing the virtual machine files (it might even be lightly utilized because of its poor connection state), and therefore DRS will not consider the host due to the incompatibility, even though from a DRS load balancing perspective this host might be a very attractive option to solve the resource imbalance. Also keep in mind the impact of this behavior on VM-Host affinity rules: DRS will not migrate the virtual machine to a partially connected host inside the host group.
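The scenario above can be illustrated with a small sketch. It deliberately reduces DRS to "pick the least utilized compatible host", which is a strong simplification of the real algorithm (no entitlement or imbalance metric is modeled); `pick_migration_target` and all values are hypothetical.

```python
from typing import Dict, Optional, Set


def pick_migration_target(
    vm_datastore: str,
    host_utilization: Dict[str, float],
    host_datastores: Dict[str, Set[str]],
    current_host: str,
) -> Optional[str]:
    """Pick the least utilized host that can reach the VM's datastore."""
    candidates = [
        host for host in host_utilization
        # Incompatible hosts are dropped before utilization is even looked at.
        if host != current_host and vm_datastore in host_datastores[host]
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda host: host_utilization[host])


utilization = {"esxi-01": 0.90, "esxi-02": 0.85, "esxi-03": 0.20}
datastores = {
    "esxi-01": {"ds-01", "ds-02"},
    "esxi-02": {"ds-01", "ds-02"},
    "esxi-03": {"ds-01"},  # lightly utilized, but ds-02 is not connected
}

# The VM lives on ds-02, so the attractive host esxi-03 is never considered.
print(pick_migration_target("ds-02", utilization, datastores, "esxi-01"))
```

Here the move goes to `esxi-02`, which barely helps the imbalance, while the nearly idle `esxi-03` stays idle.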

Something similar happens with SDRS load balancing. Partially connected datastores are not recommended when fully connected datastores are available that do not violate the SDRS space threshold. You might wonder why the space threshold is explicitly mentioned and not IO load balancing. That’s because IO load balancing is disabled when a partially connected datastore is detected in the datastore cluster.
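A rough sketch of that preference, under our own assumptions: fully connected datastores below the space threshold win, and partially connected datastores are only offered when no such datastore exists. The `Datastore` class, the `candidate_datastores` function, and the 80% threshold value are assumptions for illustration, not the actual SDRS implementation.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Datastore:
    name: str
    connected_hosts: Set[str]
    space_used_pct: float


def candidate_datastores(
    datastores: List[Datastore],
    cluster_hosts: Set[str],
    space_threshold_pct: float = 80.0,  # assumed threshold for the example
) -> List[str]:
    """Return destination candidates, preferring fully connected datastores."""
    below = [d for d in datastores if d.space_used_pct < space_threshold_pct]
    fully = [d for d in below if cluster_hosts <= d.connected_hosts]
    # Fall back to partially connected datastores only when no fully
    # connected datastore stays below the space threshold.
    chosen = fully if fully else below
    return [d.name for d in sorted(chosen, key=lambda d: d.space_used_pct)]


hosts = {"esxi-01", "esxi-02", "esxi-03"}
pool = [
    Datastore("ds-01", set(hosts), 70.0),
    Datastore("ds-02", {"esxi-01", "esxi-02"}, 30.0),  # partially connected
    Datastore("ds-03", set(hosts), 85.0),              # above the threshold
]

print(candidate_datastores(pool, hosts))
```

Even though `ds-02` has by far the most free space, it is not recommended as long as `ds-01` is available.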

IO load balancing

It is important to understand the impact a single partially connected datastore has on the service level of an entire datastore cluster. When SDRS detects a partially connected datastore, it disables IO load balancing not only on that single datastore, but on the entire datastore cluster, effectively degrading a complete feature set of your virtual infrastructure.
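The all-or-nothing nature of this behavior is easy to express in a few lines. Again, this is a simplified model and not SDRS code; `enabled_balancing_modes` is a hypothetical helper.

```python
from typing import Dict, List, Set


def enabled_balancing_modes(
    cluster_hosts: Set[str],
    datastore_hosts: Dict[str, Set[str]],
) -> List[str]:
    """Return the load balancing modes active for the datastore cluster."""
    modes = ["space"]  # space balancing stays available
    # IO load balancing is only kept when every datastore sees every host.
    if all(cluster_hosts <= hosts for hosts in datastore_hosts.values()):
        modes.append("io")
    return modes


hosts = {"esxi-01", "esxi-02", "esxi-03", "esxi-04"}
healthy = {f"ds-{i:02d}": set(hosts) for i in range(1, 11)}
degraded = dict(healthy)
degraded["ds-07"] = hosts - {"esxi-04"}  # one host lost its connection

print(enabled_balancing_modes(hosts, healthy))
print(enabled_balancing_modes(hosts, degraded))
```

Nine out of ten datastores are fine in the second case, yet IO load balancing is gone for all ten.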

Temporary partial connectivity: a real threat?

The connectivity status is important when the SDRS interval expires; during the migration recommendation calculation, SDRS checks the connectivity. A temporary all-paths-down status or a rezoning procedure outside that window might not affect SDRS load balancing behavior, but what if good old Murphy decides to pay you a visit during the invocation period? Keep this behavior in mind when scheduling maintenance on the storage platform.
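To get a feel for when a maintenance window collides with an SDRS invocation, here is a small timeline sketch. It assumes invocations fire at fixed multiples of the interval and that each invocation only looks at the connectivity at that moment; the 480-minute interval and the function name `invocations_hit_by_outage` are assumptions for the example, and the sketch says nothing about what SDRS does at later invocations.

```python
from typing import List, Tuple


def invocations_hit_by_outage(
    outage: Tuple[int, int],
    interval_min: int,
    horizon_min: int,
) -> List[int]:
    """Return invocation times (in minutes) that fall inside the outage.

    outage is a (start, end) window in minutes; start is inclusive and
    end is exclusive.
    """
    start, end = outage
    invocation_times = range(interval_min, horizon_min + 1, interval_min)
    return [t for t in invocation_times if start <= t < end]


INTERVAL = 480   # assumed SDRS interval in minutes
HORIZON = 1440   # look one day ahead: invocations at 480, 960 and 1440

# A one hour rezoning job that fits between two invocations.
print(invocations_hit_by_outage((500, 560), INTERVAL, HORIZON))
# A twenty minute all-paths-down event that straddles an invocation.
print(invocations_hit_by_outage((470, 490), INTERVAL, HORIZON))
```

The short outage is the one that matters here, simply because of its timing. When planning storage maintenance, it is the overlap with the invocation that counts, not the duration.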

Warning messages

SDRS generates a warning and displays it on the SDRS faults tab in the datastores and datastore cluster view.

Benefits of partially connected

We cannot identify any direct benefit of partially connecting a datastore in a datastore cluster. Partially connected datastores impact initial placement, disable IO load balancing, and affect DRS load balancing as well as SDRS space balancing. Therefore, a basic design decision would be to connect all datastores to all hosts in the cluster connected to the datastore cluster. If anyone has a good reason for not connecting a datastore to all the hosts, please leave a comment.

Re temporary partial connectivity: The SDRS interval is whatever value is specified for the “Evaluate I/O load every” advanced option for SDRS configuration. (Sorry, I can’t find the default value for that. Does it happen to be the same 5 minutes that’s used for regular DRS?) When that interval has elapsed, SDRS migration recommendation calculation is invoked. If, during that invocation, at least one datastore has partial connectivity, then the migration recommendation will disable I/O load balancing, and the migration recommendation calculation will consider only space utilization. Correct?

What happens if full connectivity is restored before the next SDRS invocation? Will the next SDRS calculation consider I/O load balancing? I’m guessing yes it will. Or does I/O load balancing get indefinitely (permanently) disabled just because one SDRS invocation happened while there was one datastore partially connected? And if one incident turns off I/O load balancing indefinitely, how do you re-enable it?

Re valid reasons for designing partial datastore connectivity in a cluster: I can’t think of any good cases.

Playing devil’s advocate, maybe do that if you were approaching the 1024 paths/host configuration maximum (vSphere 4.x and 5.0) and you could get around that by making some datastores visible to only the even-numbered hosts and others to only the odd-numbered hosts, etc. But in that case you’d still likely just split the hosts and datastores into multiple fully connected clusters: since you couldn’t move VMs around without Storage vMotion or cold migration anyway, why have them in one cluster? Maybe if some datastores were holding .ISO files or other things that should be visible to the hosts but aren’t integral to the VM (like .vmdk, .vmx, and .vswp files are)? Or maybe if it’s a small cluster and you want to reserve spare capacity for a host failure (like a 4-host cluster able to tolerate one host failure and running at <= 67% average, vs. two 2-host clusters each able to tolerate one host failure, running at <= 50% average). In such a small cluster, you'd need a lot of datastores per VM, like maybe if they needed many pRDMs for an Exchange deployment (say 20 LUNs for logs + DB per VM) that uses SAN-aware software in the VMs for backup quiescing, etc. (thus requiring pRDM). This seems unlikely.

Certainly I agree that "all hosts in a cluster should see all the same datastores" is a good guideline for the vast majority of cases.