Lately I have been receiving questions on best practices and considerations for aligning the Storage DRS latency and Storage IO Control (SIOC) latency, how they are correlated and how to configure them to work optimally together. Let’s start with identifying the purpose of each setting, review the enhancements vSphere 5.1 has introduced and discover the impact when misaligning both thresholds in vSphere 5.0.

Purpose of the SIOC threshold

The main goal of the SIOC latency is to give fair access to the datastores, throttling virtual machine outstanding I/O to the datastores across multiple hosts to keep the measured latency below the threshold.

It can have a restrictive effect on the I/O flow of virtual machines.

Purpose of the Storage DRS latency threshold

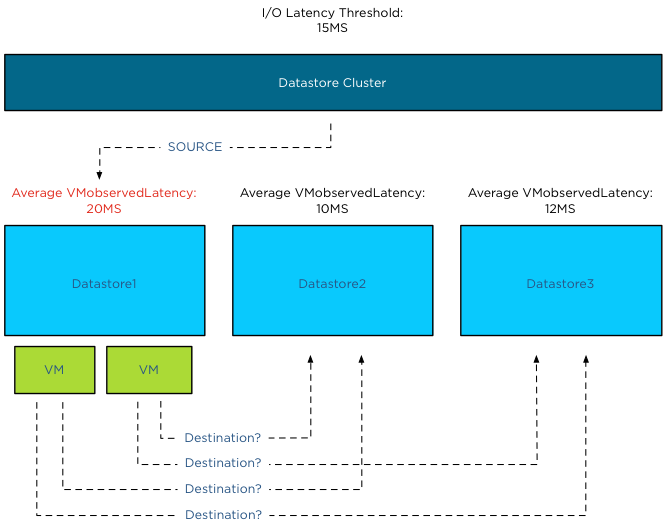

The Storage DRS latency is a threshold to trigger virtual machine migrations. To be more precise, if the average latency (VMObservedLatency) of the virtual machines on a particular datastore is higher than the Storage DRS threshold, then Storage DRS will mark that datastore as “source” for load balancing migrations. In other words, that datastore provide the candidates (virtual machines) for Storage DRS to move around to solve the imbalance of the datastore cluster.

This means that the Storage DRS threshold metric has no “restrictive” access limitations. It does not limit the ability of the virtual machines to send I/O to the datastore. It is just an indicator for Storage DRS which datastore to pick for load balance operations.

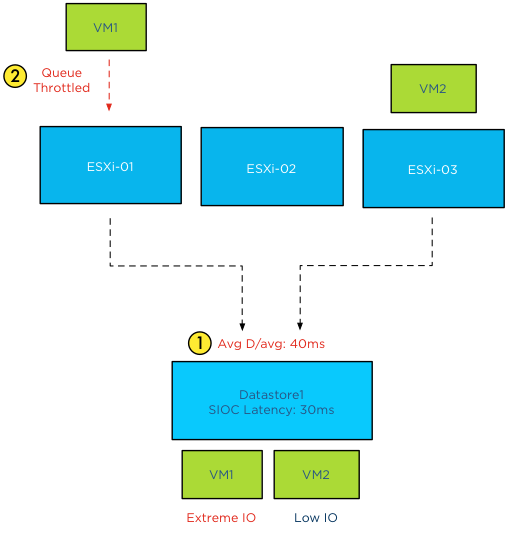

SIOC throttling behavior

When the average device latency detected by SIOC is above the threshold, SIOC throttles the outstanding IO of the virtual machines on the hosts connected to that datastore. However due to different number of shares, various IO sizes, random versus sequential workload and the spatial locality of the changed blocks on the array, we are almost certain that no virtual machine will experience the same performance. Some virtual machines will experience a higher latency than other virtual machines running on that datastore. Remember SIOC is driven by shares, not reservations, we cannot guarantee IO slots (reservations). Long story short, when the datastore is experiencing latency, the VMkernel manages the outbound queue, resulting in creating a buildup of I/O somewhere higher up in the stack. As the SIOC latency threshold is the weighted average of D/AVG per host, the weight is the number of IOPS on that host. For more information how SIOC calculates the Device average latency, please read the article: “To which host-level latency statistic is the SIOC congestion threshold related?”

Has SIOC throttling any effect on Storage DRS load balancing?

Depending on which vSphere version you run is the key whether SIOC throttling has impact on Storage DRS load balancing. As stated in the previous paragraph, if SIOC throttles the queues, the virtual machine I/O does not disappear, vSphere always allows the virtual machine to generate I/O to the datastore, it just builds up somewhere higher in the stack between the virtual machine and the HBA queue.

In vSphere 5.0, Storage DRS measures latency by averaging the device latency of the hosts running VMs on that datastore. This is almost the same metric as the SIOC latency. This means that when you set the SIOC latency equal to the Storage DRS latency, the latency will be build up in the stack above the Storage DRS measure point. This means that in worst-case scenario, SIOC throttles the I/O, keeping it above the measure point of Storage DRS, which in turn makes the latency invisible to Storage DRS and therefore does not trigger the load balance operation for that datastore.

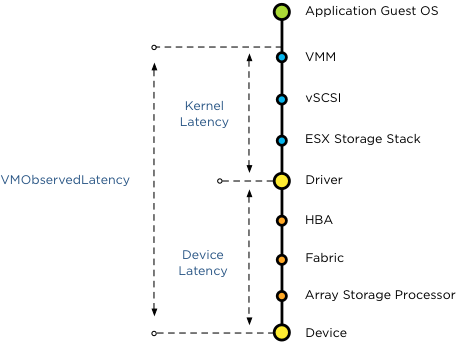

Introducing vSphere 5.1 VMObservedLatency

To avoid this scenario Storage DRS in vSphere 5.1 is using the metric VMObservedLatency. This metric measures the round-trip of I/O from the moment the VMkernel receives the I/O (Virtual Machine Monitor) to the datastore and all the way back to the VMM.

This means that when you set the SIOC latency to a lower threshold than the Storage DRS latency, Storage DRS still observes the latency build up in the kernel layer.

vSphere 5.1 Automatic latency SIOC

To help you avoid building up I/O in the host queue, vSphere 5.1 offers automatic threshold computation for SIOC. SIOC sets the latency to 90% of the throughput level of the device. To determine this, SIOC derives a latency setting after a series of tests, mapping maximum throughput to a latency value. During the tests SIOC detects where the throughput of I/O levels out, while the latency keeps on increasing. To be conservative, SIOC derives a latency value that allows the host to generate up to 90% of the throughput, leaving a burst space of 10%. This provides the best performance of the devices, avoiding unnecessary restrictions by building up latency in the queues. In my opinion, this feature alone warrants the upgrade to vSphere 5.1.

How to set the two thresholds to work optimally together

SIOC in vSphere 5.1 allows the host to go up to 90% of the throughput before adjusting the queue length of each host, and generate queuing in the kernel instead of queuing on the storage array. As Storage DRS uses VMObservedLatency it monitors the complete stack. It observes the overall latency, disregarding the location of the latency in the stack and tries to move VMs to other datastores to level out the overall experienced latency in the datastore cluster. Therefore you do not need to worry about misaligning the SIOC latency and the Storage DRS I/O latency.

If you are running vSphere 5.0 it’s recommended setting the SIOC threshold to a higher value than the Storage DRS I/O latency threshold. Please refer to your storage vendor to receive the accurate SIOC latency threshold.

Get notification of these blogs postings and more DRS and Storage DRS information by following me on Twitter: @frankdenneman

vSphere 5.1 Storage DRS load balancing and SIOC threshold enhancements

3 min read

This is certainly the first time I have come across such an explanation. You said above “…vSphere 5.1 offers automatic threshold computation for SIOC. SIOC sets the latency to 90% of the throughput level of the device.” I have a 5.1 environment and when I enable SIOC on my Datastores, it has a “Congestion Threshold” which defaults to 30ms. There is no other option for “automatic”. I appreciate your reply – thanks.

Ali,

This feature is available via the vSphere web client, not the vSphere client

Thanks for the clarification Frank.

This is great blog.