During my Storage DRS presentation at VMworld I talked about datastore cluster architecture and covered the impact of partially connected datastore clusters. In short – when a datastore in a datastore cluster is not connected to all hosts of the connected DRS cluster, the datastore cluster is considered partially connected. This situation can occur when not all hosts are configured identically, or when new ESXi hosts are added to the DRS cluster.
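
The definition boils down to a set comparison. Here is a minimal Python sketch, assuming you already have the host names of the DRS cluster and, per datastore, the hosts that mount it. The names `missing_hosts`, `cluster_hosts` and `mounts` are hypothetical, and the inventory is made-up sample data rather than output from a vSphere API:

```python
def missing_hosts(cluster_hosts, mounts):
    """Return {datastore: sorted list of cluster hosts that do not mount it}.

    Datastores that are mounted on every host are left out of the result.
    """
    cluster = set(cluster_hosts)
    result = {}
    for datastore, hosts in mounts.items():
        missing = cluster - set(hosts)
        if missing:
            result[datastore] = sorted(missing)
    return result


# Hypothetical sample inventory: esxi-03 was added to the DRS cluster later.
cluster_hosts = ["esxi-01", "esxi-02", "esxi-03"]
mounts = {
    "nfs-ds-01": ["esxi-01", "esxi-02", "esxi-03"],
    "nfs-ds-02": ["esxi-01", "esxi-02"],
}

print(missing_hosts(cluster_hosts, mounts))  # {'nfs-ds-02': ['esxi-03']}
```

Any non-empty result means the datastore cluster is partially connected.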

The problem

I/O load balancing does not support partially connected datastores in a datastore cluster, so Storage DRS disables I/O load balancing for the entire datastore cluster, not just for the single partially connected datastore. This effectively degrades a complete feature set of your virtual infrastructure, which makes a homogeneous configuration throughout the cluster imperative.
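
To make the all-or-nothing nature of this rule concrete, here is a small Python sketch. It only models the behavior described above and is not how Storage DRS is implemented. The function name `io_load_balancing_active` and the sample host and datastore names are hypothetical:

```python
def io_load_balancing_active(cluster_hosts, mounts):
    """Model of the rule: True only if every datastore in the datastore
    cluster is mounted on every host of the DRS cluster."""
    cluster = set(cluster_hosts)
    return all(cluster <= set(hosts) for hosts in mounts.values())


# Hypothetical sample data.
hosts = ["esxi-01", "esxi-02", "esxi-03"]

fully_connected = {
    "nfs-ds-01": ["esxi-01", "esxi-02", "esxi-03"],
    "nfs-ds-02": ["esxi-01", "esxi-02", "esxi-03"],
}
# A single datastore missing on a single host is enough.
partially_connected = {
    "nfs-ds-01": ["esxi-01", "esxi-02", "esxi-03"],
    "nfs-ds-02": ["esxi-01", "esxi-02"],
}

print(io_load_balancing_active(hosts, fully_connected))      # True
print(io_load_balancing_active(hosts, partially_connected))  # False
```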

Warning messages

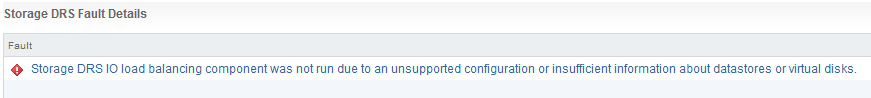

An entry is listed in the Storage DRS Faults window. In the vSphere Web Client:

1. Go to Storage

2. Select the datastore cluster

3. Select Monitor

4. Storage DRS

5. Faults.

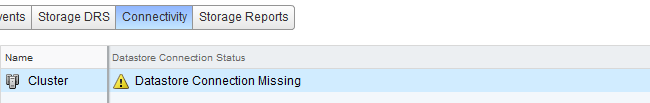

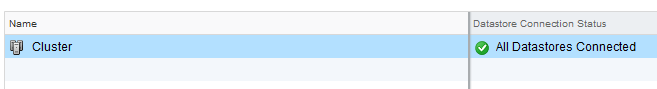

The Connectivity menu option shows the Datastore Connection Status. For a partially connected datastore, the message Datastore Connection Missing is listed.

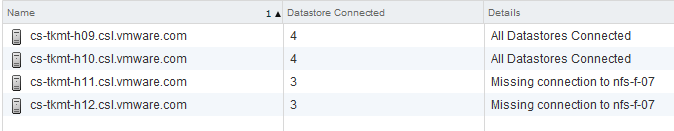

When you click on the entry, the details are shown in the lower part of the view.

Returning to a fully connected state

To solve the problem, you must connect or mount the datastores to the newly added hosts. In the web client this is considered a host operation, so select the datacenter view and then the Hosts menu option.

1. Right-click on a newly added host

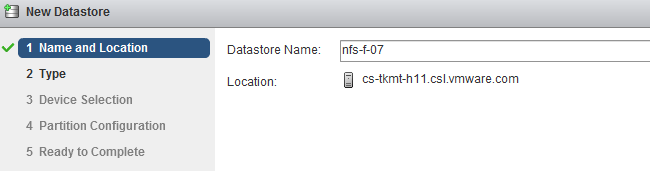

2. Select New Datastore

3. Provide the name of the existing datastore

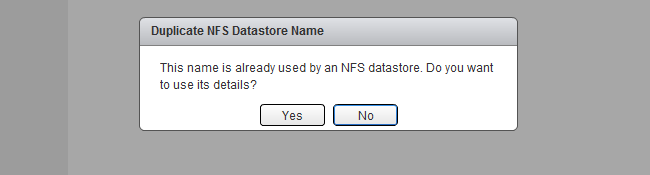

4. Click on Yes when the warning “Duplicate NFS Datastore Name” is displayed.

5. Because the UI reuses the existing information, click Next until you can click Finish.

6. Repeat these steps for the other new hosts.
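
Because the procedure above is carried out host by host, it can help to turn the connectivity information into a per-host worklist first. Here is a minimal Python sketch, assuming the same kind of hand-collected inventory (hosts in the DRS cluster, and the hosts that mount each datastore). The function name `mounts_to_add` and all host and datastore names are hypothetical:

```python
from collections import defaultdict


def mounts_to_add(cluster_hosts, mounts):
    """Return {host: sorted list of datastores still to be mounted on it}."""
    cluster = set(cluster_hosts)
    todo = defaultdict(list)
    for datastore, hosts in mounts.items():
        for host in cluster - set(hosts):
            todo[host].append(datastore)
    # Sort hosts and datastores so the worklist is stable and easy to read.
    return {host: sorted(ds) for host, ds in sorted(todo.items())}


# Hypothetical sample: esxi-03 and esxi-04 are newly added hosts.
cluster_hosts = ["esxi-01", "esxi-02", "esxi-03", "esxi-04"]
mounts = {
    "nfs-ds-01": ["esxi-01", "esxi-02", "esxi-03"],
    "nfs-ds-02": ["esxi-01", "esxi-02"],
}

for host, datastores in mounts_to_add(cluster_hosts, mounts).items():
    print(host, "->", ", ".join(datastores))
# esxi-03 -> nfs-ds-02
# esxi-04 -> nfs-ds-01, nfs-ds-02
```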

After connecting all the new hosts to the datastore, check the connectivity view in the Monitor menu of the datastore cluster.

Get notified of these blog posts and more DRS and Storage DRS information by following me on Twitter: @frankdenneman

Partially connected datastore clusters – where can I find the warnings and how to solve it via the web client?


Good info Frank! One quick question. When ESXi is deployed, it uses the disk space it needs to install, and the remainder of the disk is presented to the host as a local datastore. Obviously this is not shared either. Would this also be treated as partially connected, and if so, does that mean this would trigger the same effect you described above?

Hi Bilal,

Using non-shared datastores in a datastore cluster is not supported, and therefore I would recommend against adding these to your datastore cluster. Storage DRS does not leverage non-shared vMotion.

Hi Frank,

How can we resolve this from the vSphere client? We have an odd situation where 3 datastores are not connecting to one host in a cluster of 16. It’s correctly zoned and another LUN has been added as a datastore successfully. Obviously the function isn’t available to create new datastores from the vSphere client, so selecting “add storage” brings up no results, or is this a case where disabling the masking of already added LUNs as datastores should be used?

If the datastores are correctly zoned and a rescan of the datastores doesn’t help, I would suggest you put the host in maintenance mode and reboot it. The initialization process during the OS startup is a little more thorough, and that always used to help me.

Thanks Frank, I’ve just tried that to no avail. I think I’ll have to log an SR.

Just a follow-up. Support was able to point us in the right direction, as it’s a known issue/not currently supported bug related to VAAI with EMC arrays, as per: http://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2006858