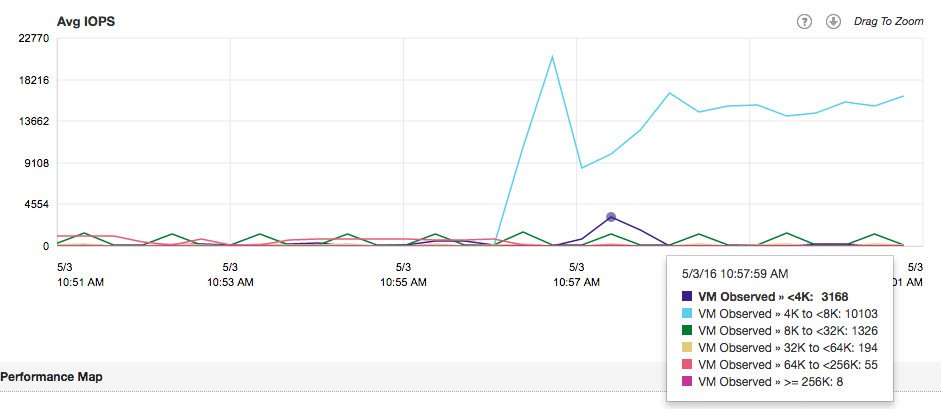

A big part of resource management is sizing virtual machines. Right-sizing allows IT teams to optimize the resource utilization of their virtual machines, and it has become a tactical tool for enterprise IT teams to ensure maximum workload performance and efficient use of the physical infrastructure. Another big part of resource management is keeping track of resource utilization; some of these processes are part of the daily operational tasks performed by specialized monitoring teams or by the administrators themselves. Service providers usually cannot influence the right-sizing element, therefore they focus more on the monitoring part. What is almost universal across virtual infrastructure owners is the incidental nature of tracking down ‘noisy neighbor’ VMs. Noisy neighbor VMs generate workload in such a way that they monopolize resources and have a negative impact on the performance of other virtual machines. Service providers and enterprise IT teams have to deal with these consumer outliers in order to meet the SLAs of existing workloads and to satisfy the SLA requirements of new workloads.

It’s interesting that noisy neighbor tracking is an incidental activity, as noisy neighbors can be so detrimental to the performance of the virtual datacenter. Tools such as vSphere Storage IO Control (short-term focus) and vSphere Storage DRS (long-term focus) help relieve the infrastructure of the burden of noisy neighbors, but attacking this problem structurally is necessary to ensure consistent and predictable performance from your infrastructure. In the long term, noisy neighbor VMs impact the projected consolidation ratio, which in turn influences the growth rate of the infrastructure. I’ve seen plenty of knee-jerk reactions that created server and storage infrastructure sprawl after the introduction of these outlier workloads.

Identifying noisy neighbors can become a valuable tool in both the strategic and tactical playbooks of the IT organization. Having insight into which VMs are monopolizing resources allows IT teams to act appropriately. Similar to real life, the behavior of a noisy neighbor can often be changed, but sometimes that’s the nature of the beast and you just have to live with it. In that situation noisy neighbors become outliers of conduct and one has to make external adjustments. This insight allows IT teams to respond along the entire vertical axis of the virtual datacenter, from application to infrastructure choice. With the correct analysis, the IT team can provide insights to the application owner, allowing them to adjust accordingly. It also helps the IT team understand whether the environment can handle the workload and make the necessary adjustments to the infrastructure. Sometimes the intensity of the workload is just what it is, and hosting that workload is necessary to support the business. In that case the IT team has to understand whether the infrastructure is suitable to support the application. As most IT organizations have access to multiple platforms, accurate insight into the characteristics (and requirements) of the workload allows them to identify the correct platform.
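To make "which VMs are monopolizing the resources" a little more concrete, here is a minimal sketch of one possible approach: flag VMs whose average IOPS is a robust outlier compared to the rest of the cluster, using a modified z-score based on the median. Everything in it is an assumption for illustration only: the VM names, the sample numbers, the function name `find_noisy_neighbors` and the 3.5 cut-off (a common rule of thumb for this score) are hypothetical, and it does not represent how any vSphere feature works.

```python
from statistics import median


def find_noisy_neighbors(iops_by_vm, threshold=3.5):
    """Return the VMs whose IOPS are high outliers (modified z-score).

    iops_by_vm: dict mapping a VM name to its average IOPS (hypothetical input).
    threshold:  cut-off for the modified z-score; 3.5 is an assumed rule of thumb.
    """
    values = list(iops_by_vm.values())
    med = median(values)
    # Median absolute deviation: a spread measure that a single
    # extreme VM cannot inflate the way it inflates a standard deviation.
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # At least half of the VMs share the median value; no usable spread.
        return []
    noisy = []
    for vm, iops in iops_by_vm.items():
        score = 0.6745 * (iops - med) / mad
        if score > threshold:  # only flag heavy consumers, not idle VMs
            noisy.append(vm)
    return noisy


# Hypothetical cluster: four similar VMs and one heavy batch workload.
sample = {
    "vm-app01": 420,
    "vm-app02": 380,
    "vm-db01": 510,
    "vm-web01": 450,
    "vm-batch01": 9800,
}
print(find_noisy_neighbors(sample))
```

A median-based score is used here instead of a mean and standard deviation because the noisy neighbor itself would otherwise drag the baseline upwards and hide its own impact.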

Virtual datacenters are difficult to monitor. They are composed of a disparate stack of components, and every component logs and presents data differently. Different granularity of information, different time frames, and different output formats make it extremely difficult to correlate data. In addition, you need to be able to correctly identify the workload characteristics and interpret the impact they have on the shared environment. We no longer live in a world where we deal with isolated technology stacks. Applications typically no longer run on a single box connected to a single, isolated RAID array. Today everything within the infrastructure is shared, and hardware resources are spread across more consumers with each introduction of new hardware. Where we used to run a single application in a VM on top of a server with ten other VMs, sharing a couple of NICs and HBAs, we slowly moved towards converged network platforms. In the last 10 years we have shared more and more; the only monolith remaining is the application in the VM, and that is rapidly changing as well with the popularity of containers and microservices. Yet most of our testing mechanisms and monitoring efforts are still based on the architecture we left behind 10 years ago. Virtual datacenters require continuous analytics that fully comprehend the context of the environment, with the ability to zoom in and focus on outliers if necessary.
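As a small illustration of the correlation problem, the sketch below assumes two hypothetical data sources: host-level samples every 20 seconds and array-level samples every 5 minutes. It averages both into common 5-minute buckets so they can be compared side by side. The function names, the intervals and the numbers are assumptions made up for this example, not the output of any particular tool.

```python
from collections import defaultdict


def bucket_average(samples, bucket_seconds=300):
    """Average (timestamp, value) samples into fixed-size time buckets.

    Returns a dict mapping each bucket start time (in seconds) to the
    mean of the values that fall inside that bucket.
    """
    sums = defaultdict(lambda: [0.0, 0])  # bucket start -> [total, count]
    for ts, value in samples:
        start = ts - (ts % bucket_seconds)
        sums[start][0] += value
        sums[start][1] += 1
    return {start: total / count for start, (total, count) in sorted(sums.items())}


def correlate(source_a, source_b, bucket_seconds=300):
    """Pair up two sources on the time buckets they have in common."""
    a = bucket_average(source_a, bucket_seconds)
    b = bucket_average(source_b, bucket_seconds)
    return {start: (a[start], b[start]) for start in a if start in b}


# Hypothetical data: host latency sampled every 20 seconds,
# array latency reported once per 5 minutes.
host_samples = [(0, 10), (20, 20), (40, 30), (300, 40)]
array_samples = [(0, 5.0), (300, 7.0)]
print(correlate(host_samples, array_samples))
```

Averaging down to the coarsest interval is the simplest choice, but it smooths away short bursts, which is exactly where noisy neighbors tend to show up; finer-grained sources are worth keeping at full resolution when zooming in.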

In the upcoming series I’m going to focus on how to explore cluster-level workloads and progressively zoom in on specific workloads based on IOPS, block size, throughput and unaligned I/Os.

Tracking down noisy neighbors
