Uncategorized

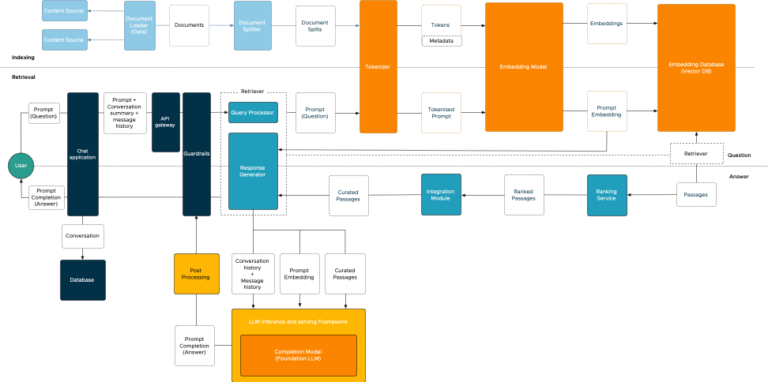

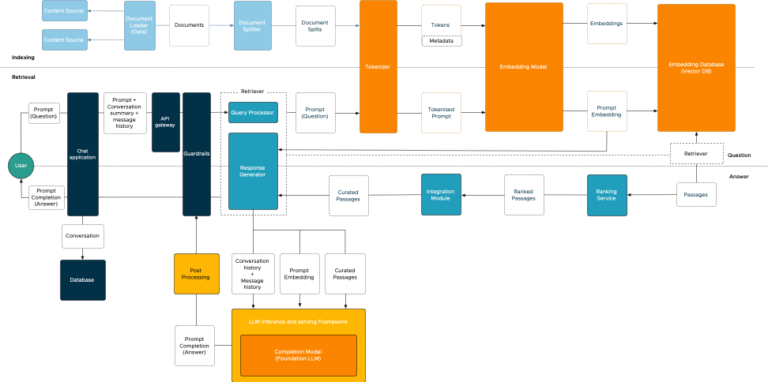

I’m excited to announce the release of my latest white paper, “VMware Private AI Foundation – Privacy and Security Best Practices.”...

I’m looking forward to next week’s VMware Explore conference in Barcelona. It’s going to be a busy week. Hopefully, I will...

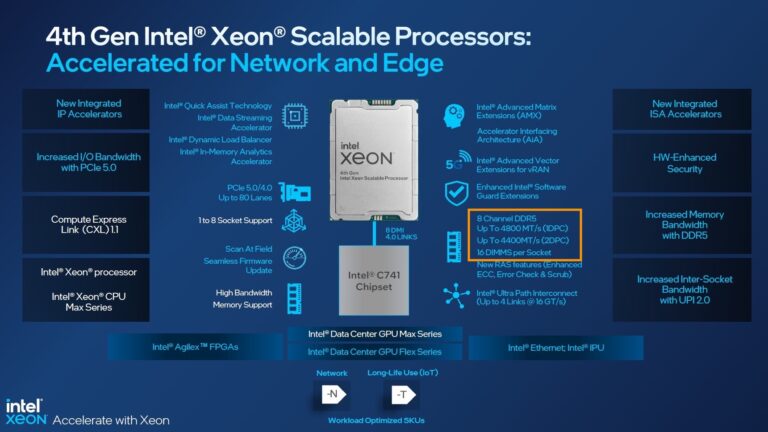

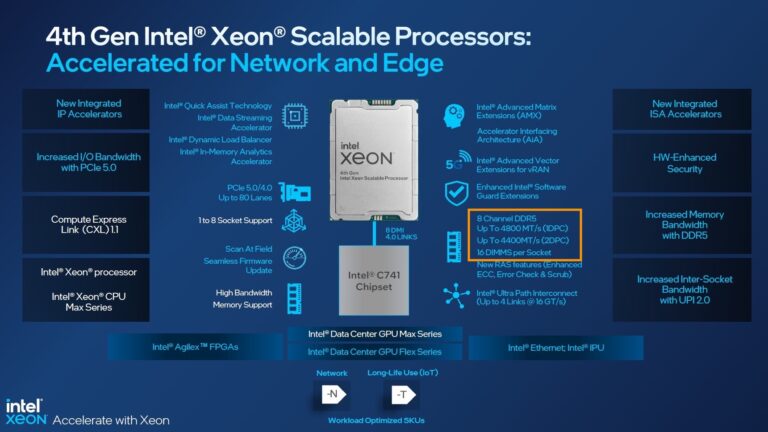

The 4th generation of the Intel Xeon Scalable Processors (codenamed Sapphire Rapids) was released early this year, and I’ve been trying...

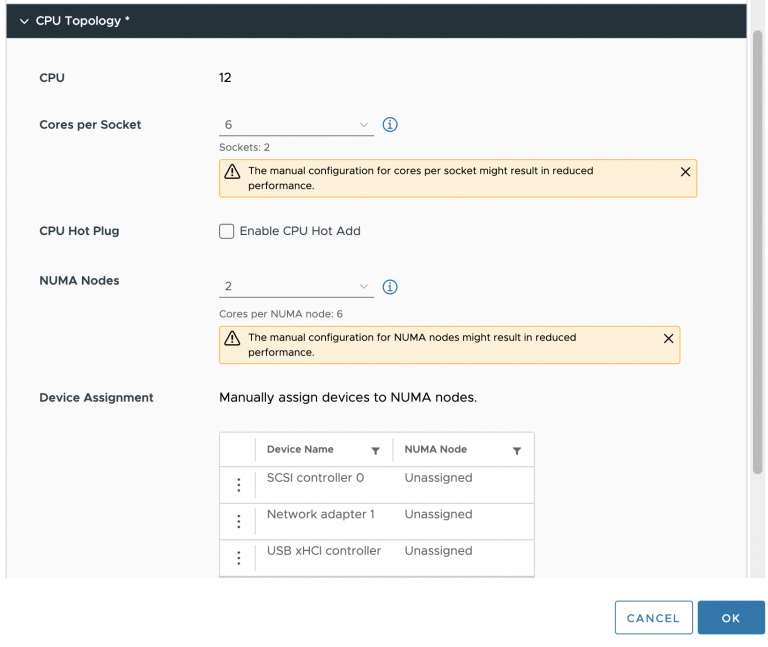

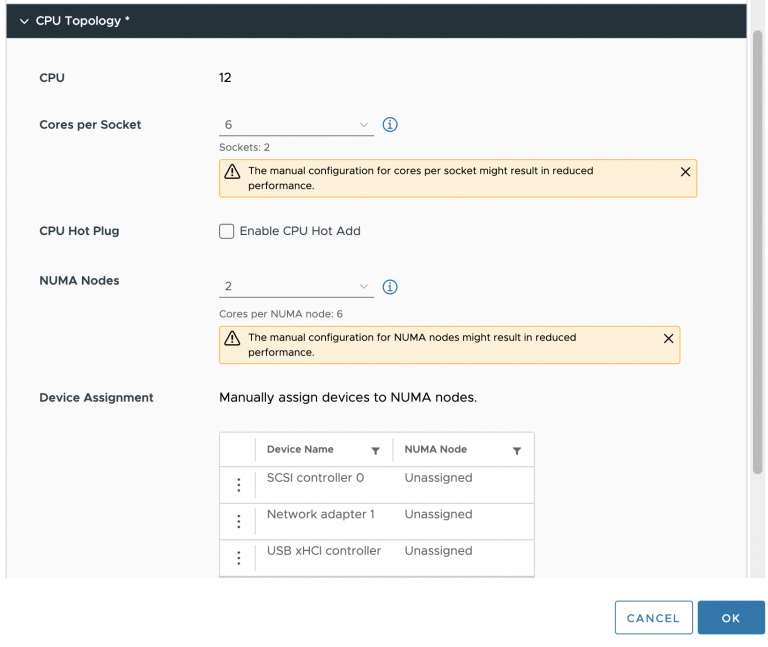

By default, vSphere manages the vCPU configuration and vNUMA topology automatically. vSphere attempts to keep the VM within a NUMA node...

Katarina posted a very expressive tweet about her love/hate (mostly hate) relationship with CPU pinning, and lately I have been in...

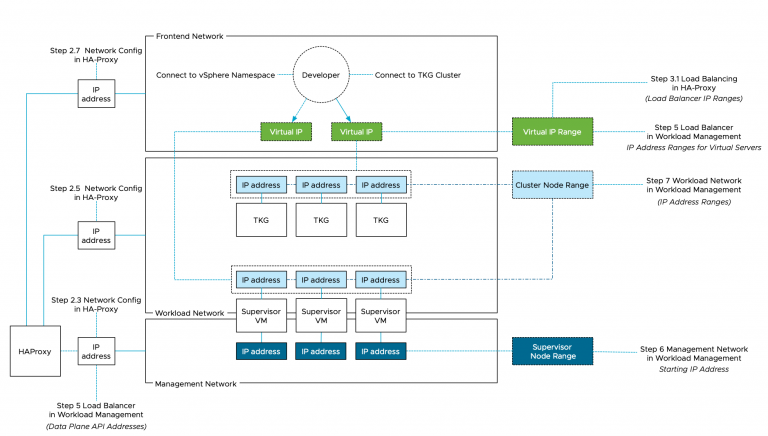

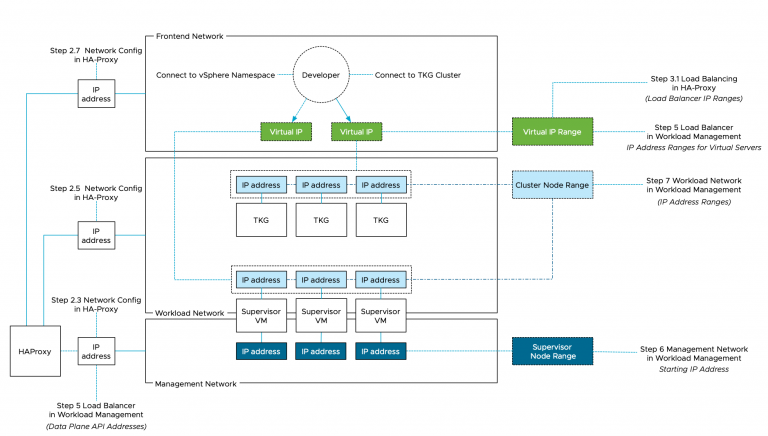

I noticed quite a few network-related queries about installing vSphere with Tanzu together with vCenter Server Networks (Distributed Switch...

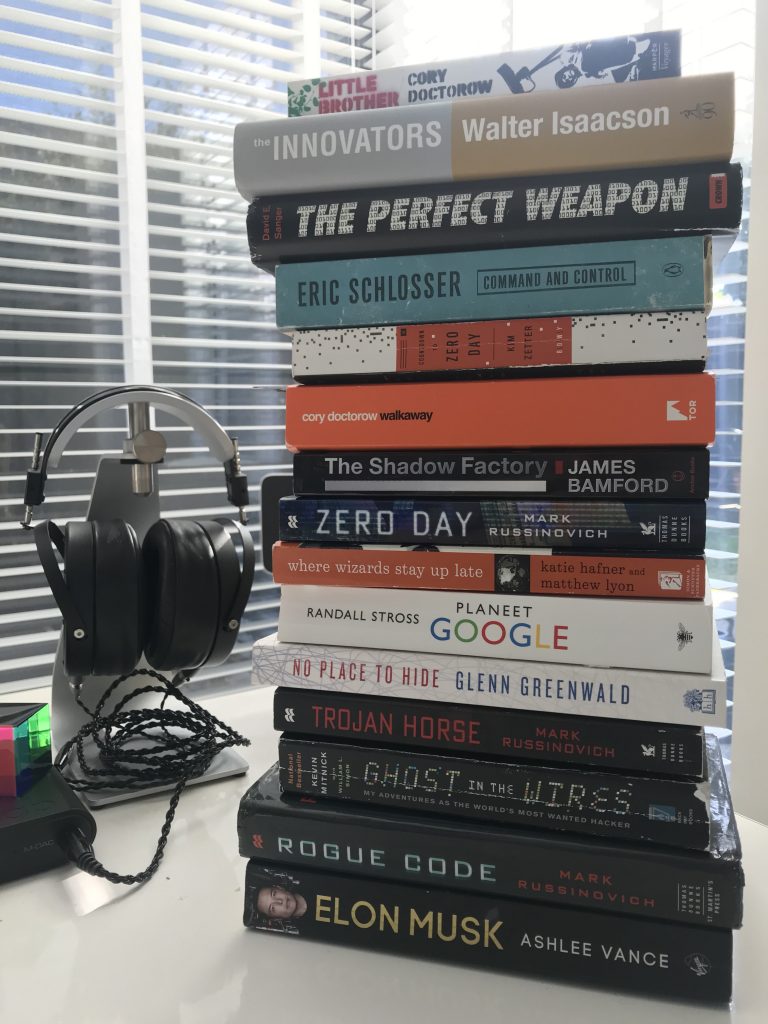

Today Duncan and I were discussing some great books to read. Disconnecting fully from work is difficult for me, so typically,...

vSphere 7 contains a new DRS algorithm that differs tremendously from the old one. The performance team has put the new...

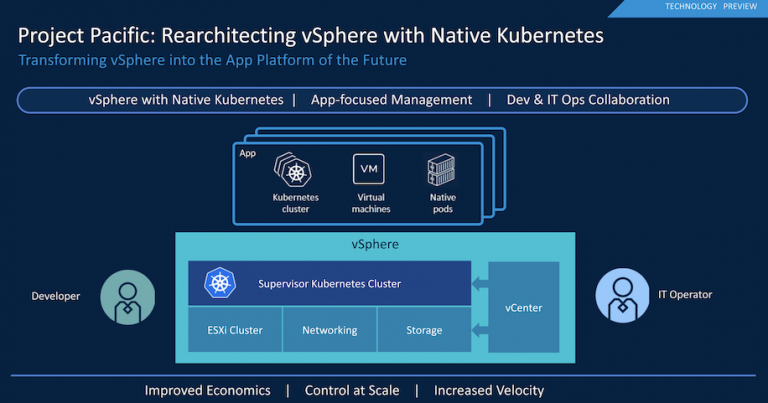

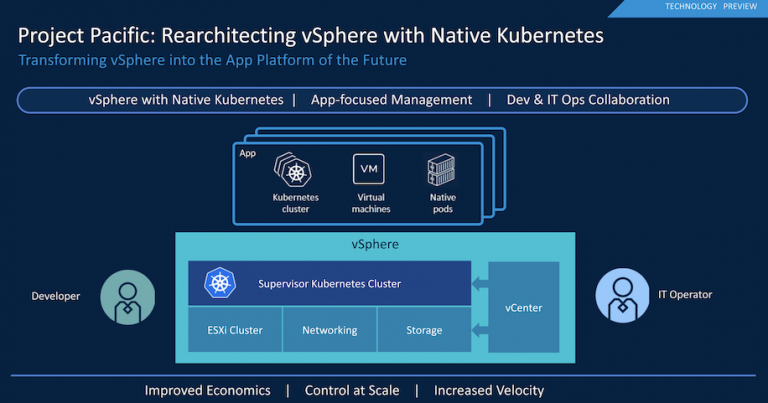

During the keynote on the first day of VMworld 2019, Pat unveiled Project Pacific. In short, Project Pacific transforms vSphere into...

The title is a reference to one of the most interesting books I have ever read, Gödel, Escher, Bach. Someone...