Miscellaneous

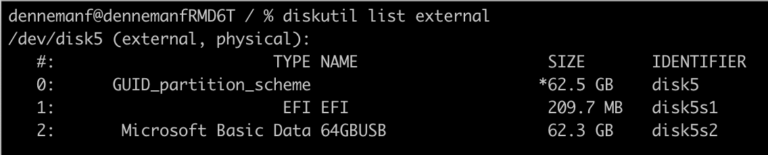

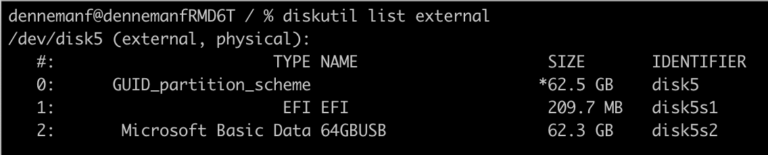

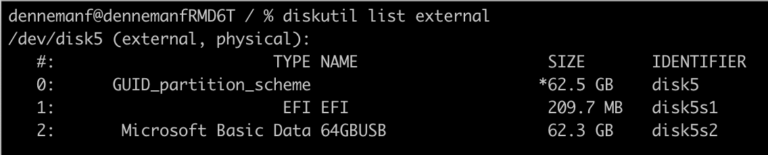

I need to install Windows 11 on a gaming PC, but I only have a MacBook in my house, as this...

During the Belgium VMUG, I talked with Jeffrey Kusters and the VMUG leadership team about the challenges of writing a book....

More than a week ago Niels and I released the VMware vSphere 6.5 Host Resources Deep Dive and the community has...

Last week we released the VMware vSphere 6.5 Host Resources Deep Dive book and Twitter and Facebook exploded. We’ve seen some...

Amy Lewis asked me to appear on the Geek Whisperers Live podcast at VMworld 2016 in Las Vegas. And as always...

Yesterday the top 25 vBlogs were announced and once again I’m in the top 5. I would like to thank all...

For the last three months, my main focus within PernixData has been (and still is) the PernixCloud program. In short, PernixData...

Recently, Satyam Vaghani wrote about PernixData Cloud. In short, PernixData Cloud is the next logical progression of PernixData Architect and provide...

One of my favorite business books is Michael Hammer’s famous “Reengineering the Corporation”. The theme is the book...

Welcome to part 6 of the Database workload characteristics series. Databases are considered to be one of the biggest I/O consumers...