If your environment is licensed with the Enterprise Plus license, you can choose to use either a standard vSwitch or a distributed switch for your vMotion network. A Multi-NIC vMotion network is a complex configuration that consists of many different components, and each component needs to be configured identically on every host in the cluster. Distributed switches can help you with that. In addition, they provide tools to prioritize traffic and allow other network streams to use the available bandwidth when no vMotion traffic is active.

1. Use distributed portgroups for consistent configuration across the cluster

Consistently configuring two portgroups with alternating vmnic failover order on each host in the cluster is a challenging task. It's simply a fact that humans are not good at performing repetitive tasks consistently, and many virtual infrastructure health checks at various sites have confirmed it.

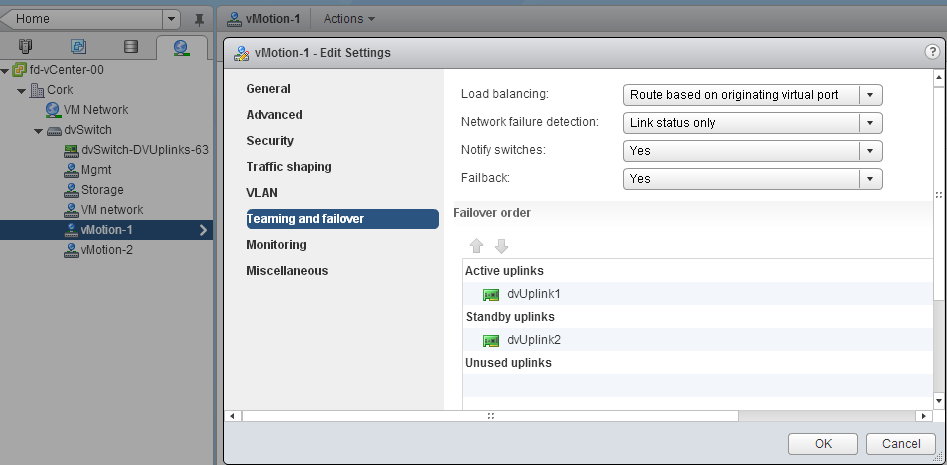

The beauty of a distributed switch (VDS) is that it acts as a configuration profile: configure the portgroup once, and the distributed switch propagates its settings to all hosts connected to it. A Multi-NIC vMotion configuration is a perfect use case for this. As mentioned, a Multi-NIC vMotion configuration is complex, consisting of two portgroups, each with its own unique settings.

By using a distributed switch, only two distributed portgroups need to be configured, and the VDS distributes the portgroups and their settings to each connected host. This saves a lot of work and ensures that every host uses the same configuration. Consistency across your cluster is important for reliable operations and consistent performance.
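To make the "configure once, propagate everywhere" idea concrete, here is a minimal Python sketch. It is a toy model, not the vSphere API: the names `TeamingPolicy`, `propagate`, `find_inconsistent_hosts` and `has_alternating_failover`, the portgroup names `vMotion-01`/`vMotion-02`, and the uplink names `dvUplink1`/`dvUplink2` are all hypothetical. It defines the two vMotion portgroups once with alternating failover order, copies that definition to every host the way a VDS would, and flags any host whose settings have drifted.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class TeamingPolicy:
    """Failover order of a portgroup: active uplinks first, then standby uplinks."""
    active: tuple[str, ...]
    standby: tuple[str, ...]


# Hypothetical reference definition, configured once on the VDS:
# each vMotion portgroup uses a different active uplink (alternating failover order).
VMOTION_PORTGROUPS = {
    "vMotion-01": TeamingPolicy(active=("dvUplink1",), standby=("dvUplink2",)),
    "vMotion-02": TeamingPolicy(active=("dvUplink2",), standby=("dvUplink1",)),
}


def propagate(portgroups: dict[str, TeamingPolicy],
              hosts: list[str]) -> dict[str, dict[str, TeamingPolicy]]:
    """Give every connected host its own copy of the same portgroup settings."""
    return {host: dict(portgroups) for host in hosts}


def find_inconsistent_hosts(host_configs: dict[str, dict[str, TeamingPolicy]],
                            reference: dict[str, TeamingPolicy]) -> list[str]:
    """Return the hosts whose portgroup settings differ from the reference."""
    return sorted(host for host, cfg in host_configs.items() if cfg != reference)


def has_alternating_failover(pg_a: TeamingPolicy, pg_b: TeamingPolicy) -> bool:
    """True if each portgroup's active uplink is the other portgroup's standby uplink."""
    return (
        pg_a.active == pg_b.standby
        and pg_a.standby == pg_b.active
        and set(pg_a.active).isdisjoint(pg_b.active)
    )


configs = propagate(VMOTION_PORTGROUPS, ["esx01", "esx02", "esx03"])
print(find_inconsistent_hosts(configs, VMOTION_PORTGROUPS))  # []

# Simulate a manual per-host mistake: vMotion-02 now uses the same active uplink as vMotion-01.
configs["esx03"]["vMotion-02"] = TeamingPolicy(active=("dvUplink1",), standby=("dvUplink2",))
print(find_inconsistent_hosts(configs, VMOTION_PORTGROUPS))  # ['esx03']
print(has_alternating_failover(configs["esx03"]["vMotion-01"],
                               configs["esx03"]["vMotion-02"]))  # False
```

With standard vSwitches every host carries its own independently edited copy, so a drift check like `find_inconsistent_hosts` is something you would have to run yourself; with a VDS the single definition is what every host receives.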

2. Set traffic priority with Network I/O Control

Network I/O Control can help you consolidate network connections into a single manageable switch, allowing you to use all available bandwidth while still respecting requirements such as traffic isolation or traffic prioritization. This applies both to configurations with a small number of 10GbE uplinks and to configurations with a high number of 1GbE ports.

vMotion consumes a lot of bandwidth and performs best in high-bandwidth environments. However, vMotion traffic is not always present. Isolating NICs to protect other network traffic streams or to provide a particular level of bandwidth can be uneconomical, leaving bandwidth idle and unused.

By using Network I/O Control, you can control the priority of network traffic during contention. This allows you to specify the relative importance of traffic streams and provide bandwidth to particular traffic streams when other traffic competes for bandwidth.
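As a rough illustration of share-based prioritization, the Python sketch below splits one uplink among the traffic types that are actually sending, in proportion to their shares, and hands any bandwidth a type does not need to the others. This is a simplified proportional-share model, not VMware's actual NetIOC scheduler; the function name `allocate_bandwidth`, the traffic type names and the share values are illustrative assumptions, not product defaults.

```python
from __future__ import annotations


def allocate_bandwidth(link_gbps: float, shares: dict[str, int],
                       demand_gbps: dict[str, float]) -> dict[str, float]:
    """Divide one uplink among traffic types in proportion to their shares.

    Only traffic types with demand take part. A type that needs less than its
    proportional slice keeps just what it needs, and the leftover is
    redistributed over the remaining types (a simple water-filling model).
    """
    allocation = {t: 0.0 for t in demand_gbps}
    active = {t for t, d in demand_gbps.items() if d > 0}
    remaining = link_gbps
    while active and remaining > 1e-9:
        total_shares = sum(shares[t] for t in active)
        fair = {t: remaining * shares[t] / total_shares for t in active}
        satisfied = {t for t in active if demand_gbps[t] - allocation[t] <= fair[t]}
        if not satisfied:
            # Contention: every remaining type is capped at its proportional slice.
            for t in active:
                allocation[t] += fair[t]
            break
        for t in satisfied:
            need = demand_gbps[t] - allocation[t]
            allocation[t] += need
            remaining -= need
        active -= satisfied
    return allocation


SHARES = {"vm": 100, "vmotion": 50, "management": 50}  # illustrative values only

# No vMotion running: total demand (9.5 Gbit/s) fits on the 10GbE link.
quiet = allocate_bandwidth(10, SHARES, {"vm": 9, "vmotion": 0, "management": 0.5})
# vMotion starts: demand exceeds the link, so shares decide the split.
busy = allocate_bandwidth(10, SHARES, {"vm": 9, "vmotion": 8, "management": 0.5})

print({t: round(g, 2) for t, g in quiet.items()})  # {'vm': 9.0, 'vmotion': 0.0, 'management': 0.5}
print({t: round(g, 2) for t, g in busy.items()})   # {'vm': 6.33, 'vmotion': 3.17, 'management': 0.5}
```

The quiet case shows why dedicating NICs wastes capacity: with no vMotion traffic, VM traffic is free to use nearly the whole link. Shares only start to matter in the busy case, when total demand exceeds the link speed.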

3. Use Load Based Teaming to balance all traffic across uplinks

Load based teaming, identified in the user interface as “Route based on physical NIC load”, balances both ingress and egress traffic. When all uplinks are consolidated in one distributed switch, load based teaming (LBT) distributes the traffic streams across the available uplinks, taking the utilization of each uplink into account.

Please note: use the “Route based on originating virtual port” load balancing policy for the two vMotion portgroups, but configure the VM network portgroups with the load based teaming policy. The originating virtual port policy creates a vNIC-to-pNIC relation when a virtual machine boots. That vNIC stays “bound” to that pNIC until the pNIC fails or the virtual machine is shut down. In a converged network, where all network traffic may use every uplink, a virtual machine could experience link saturation or latency because vMotion is using the same uplink. With LBT, the virtual machine's vNIC can be dynamically bound to a less utilized pNIC, providing better network performance. LBT monitors the utilization of each uplink, and when utilization exceeds 75 percent for a sustained period of time, LBT moves traffic to other, underutilized uplinks.
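The following Python sketch models one LBT evaluation pass under simplifying assumptions: “sustained” utilization is approximated by the mean of recent samples, the busiest movable port on a saturated uplink is moved to the least utilized uplink, and the move only happens if the target stays under the threshold. Ports in the vMotion portgroups are excluded from moves because those portgroups keep their explicit failover order. The function `rebalance`, the port names and the sample values are hypothetical; this is not the ESXi implementation.

```python
from statistics import mean

SATURATION = 0.75  # LBT acts when an uplink is more than 75% utilized


def rebalance(capacity_gbps, bindings, samples, movable):
    """Run one simplified LBT pass and return the new port-to-uplink bindings.

    capacity_gbps: uplink name -> link speed in Gbit/s
    bindings:      port name -> uplink the port is currently bound to
    samples:       port name -> recent throughput samples in Gbit/s
    movable:       ports in LBT portgroups (vMotion ports are not in this set)
    """
    bindings = dict(bindings)  # do not modify the caller's dict
    # Approximate "sustained" load as the mean of the recent samples.
    load = {port: mean(values) for port, values in samples.items()}

    def used(uplink):
        return sum(load[p] for p, u in bindings.items() if u == uplink)

    for uplink, capacity in capacity_gbps.items():
        if used(uplink) / capacity <= SATURATION:
            continue
        candidates = [p for p, u in bindings.items() if u == uplink and p in movable]
        if not candidates:
            continue  # only pinned traffic (e.g. vMotion) on this uplink
        busiest = max(candidates, key=lambda p: load[p])
        target = min(capacity_gbps, key=lambda u: used(u) / capacity_gbps[u])
        # Move only if the target uplink stays below the threshold afterwards.
        if target != uplink and (used(target) + load[busiest]) / capacity_gbps[target] <= SATURATION:
            bindings[busiest] = target
    return bindings


capacity = {"dvUplink1": 10, "dvUplink2": 10}
bindings = {"vmk-vmotion-01": "dvUplink1", "vm-a": "dvUplink1", "vm-b": "dvUplink2"}
samples = {"vmk-vmotion-01": [6, 6, 6], "vm-a": [3, 2.5, 3.5], "vm-b": [1, 1, 1]}

# dvUplink1 averages 9 Gbit/s (90%), so the busiest VM port moves to dvUplink2,
# while the vMotion VMkernel port keeps its failover order.
print(rebalance(capacity, bindings, samples, movable={"vm-a", "vm-b"}))
# {'vmk-vmotion-01': 'dvUplink1', 'vm-a': 'dvUplink2', 'vm-b': 'dvUplink2'}
```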

The benefits of a Distributed vSwitch

Consistent configuration across hosts saves a lot of effort, both during configuration and during troubleshooting, and it is key to providing a stable, well-performing environment. Multi-NIC vMotion lets you use as much bandwidth as possible, benefiting DRS in load balancing and maintenance mode operations. LBT and Network I/O Control allow other network traffic streams to consume as much bandwidth as possible. Load based teaming is a perfect partner to Network I/O Control: LBT attempts to balance network utilization across all available uplinks, while Network I/O Control dynamically distributes network bandwidth during contention.

Back to a standard vSwitch when uplink isolation is necessary?

Is this Multi-NIC vMotion/NetIOC/LBT configuration applicable to every customer? Unfortunately, it isn't. Converging all network uplinks into a single distributed switch and allowing all portgroups to use every uplink requires the VLANs to be available on every uplink. Some customers want to isolate vMotion or other traffic on dedicated links. In that scenario I would still use a distributed switch, creating a separate one for the vMotion configuration. You do not leverage LBT and Network I/O Control in this scenario, but you still benefit from the consistent configuration of distributed portgroups.
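One way to see the VLAN requirement is as a simple check: every portgroup's VLAN must be trunked on every physical uplink that portgroup is allowed to use. The Python sketch below is hypothetical (the function `missing_vlans`, the vmnic names, the VLAN IDs and the physical switch trunk configuration are made up for illustration). It compares a converged design, where every portgroup may use every uplink, with a design where vMotion gets its own distributed switch and dedicated uplinks.

```python
def missing_vlans(portgroup_vlans, trunked_vlans, allowed_uplinks):
    """List (portgroup, uplink) pairs where the portgroup's VLAN is not trunked.

    portgroup_vlans: portgroup -> VLAN ID
    trunked_vlans:   physical uplink -> set of VLAN IDs the physical switch port carries
    allowed_uplinks: portgroup -> uplinks the portgroup may use (active or standby)
    """
    return [
        (pg, uplink)
        for pg, vlan in portgroup_vlans.items()
        for uplink in allowed_uplinks[pg]
        if vlan not in trunked_vlans[uplink]
    ]


VLANS = {"Management": 10, "VM-Network": 30, "vMotion-01": 20, "vMotion-02": 20}
# Physical switch configuration that isolates vMotion (VLAN 20) on vmnic2 and vmnic3.
TRUNKS = {"vmnic0": {10, 30}, "vmnic1": {10, 30}, "vmnic2": {20}, "vmnic3": {20}}

# Converged: one VDS, every portgroup may use every uplink.
converged = {pg: ["vmnic0", "vmnic1", "vmnic2", "vmnic3"] for pg in VLANS}
# Isolated: a separate vMotion VDS owns vmnic2 and vmnic3.
isolated = {
    "Management": ["vmnic0", "vmnic1"],
    "VM-Network": ["vmnic0", "vmnic1"],
    "vMotion-01": ["vmnic2", "vmnic3"],
    "vMotion-02": ["vmnic2", "vmnic3"],
}

print(len(missing_vlans(VLANS, TRUNKS, converged)))  # 8
print(missing_vlans(VLANS, TRUNKS, isolated))        # []
```

With this isolating trunk configuration only the separate vMotion distributed switch passes the check; the converged design would first require the physical switch to carry all three VLANs on all four ports.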

Part 1 – Designing your vMotion network

Part 2 – Multi-NIC vMotion failover order configuration

Part 3 – Multi-NIC vMotion and NetIOC

Part 4 – Choose link aggregation over Multi-NIC vMotion?

Designing your vMotion network – 3 reasons why I use a distributed switch for vMotion networks


Hi Frank … Great post as ever ..

I’ve been wondering about Multi-NIC vMotion and vDS for a while, but I also had a basic NIC design question hanging over my head.

In the past .. based upon your lovely book with Duncan .. we had the Management/vMotion setup as :

vSwitch0 , 2 NICs , Active – Active

PG Management , Active vmk0 – Standby vmk1 , no failback

PG vMotion , Active vmk1 – Standby vmk0

Now that we are talking about Multi-NIC vMotion, how does that change?

Do we need to have 4 NICs and just have 2 dedicated vSwitches/VDSes? Or can we do it with 3 .. or something else?

While I agree the vDS is a great play from 5.1 going forward, there are still many large corporations running 4.0, and I would recommend staying away from the vDS in these cases because of upgrade concerns.

@dcd270