Over the last year, we’ve interviewed many guests on the Unexplored Territory Podcast, and along the way we wanted to provide a mini overview series of the VMware Cloud Services. Today we released the latest episode, featuring Jeremiah Megie discussing the Azure VMware Solution.

Azure VMware Solution

VMware Cloud on AWS

In episode 013, we talk to Adrian Roberts, Head of EMEA Solution Architecture for VMware Cloud on AWS at AWS. Adrian discusses the various reasons customers are looking to utilize VMware Cloud on AWS, some of the challenges, and the opportunities that arise when you have your VMware workloads close to native AWS services.

Google Cloud VMware Engine

In episode 016, we talk to Dr. Wade Holmes, Security Solutions Global Lead at Google. Wade introduces Google Cloud VMware Engine, discusses various use cases with us, and highlights some operational differences between on-prem only and multi-cloud.

Oracle Cloud VMware Solution

In episode 023, we talk to Richard Garsthagen, Oracle’s Director of Cloud Business Development. Our discussion was all about Oracle Cloud VMware Solution. What is unique about Oracle Cloud VMware Solution compared to other solutions? Why does Richard believe this is a platform everyone should consider when exploring public cloud offerings?

Cloud Flex Storage

In episode 037, we talk to Kristopher Groh, Director of Product Management at VMware, responsible for various storage projects. Kris introduces us to Cloud Flex Storage and discusses the implementation in depth. Kris also explains the different use cases for Cloud Flex Storage versus vSAN within VMware Cloud on AWS.

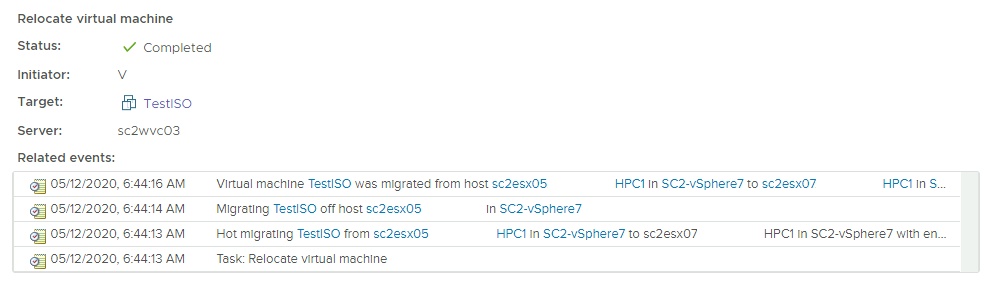

Cloud Migration

In episode 039, we have a conversation with Niels Hagoort, Technical Marketing Architect at VMware. Niels guides us through the concept of Cloud Migration and dives into the solutions that VMware offers to make the migration as smooth as possible.

Listen on Spotify or Apple.

Follow us on Twitter for updates and news about upcoming episodes: https://twitter.com/UnexploredPod.