A couple of weeks ago I was fortunate enough to attend a tech preview of the PernixData Flash Virtualization Platform (FVP). Today PernixData exited stealth mode, so we can finally talk about FVP. Duncan already posted a lengthy article about PernixData and FVP, and I recommend reading it.

At this moment a lot of companies are focusing on flash-based solutions. PernixData distinguishes itself in today’s flash-focused world by providing a new flash-based technology that is neither a storage-array-based solution nor a server-bound service. I’ll expand on what FVP does in a bit; first, let’s take a look at those two kinds of solution, because both have drawbacks. A storage-array-based flash solution is bound by physics: the distance between the workload and the fast medium (flash) generates higher latency than when the flash device is placed near the workload. Placing the flash inside a server provides the best performance, but it must be shared between the hosts in the cluster to become a true enterprise solution. If the solution breaks important functions such as DRS and vMotion, then the use case for the technology remains limited.

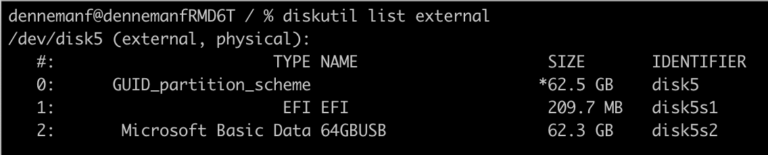

FVP solves these problems by providing a flash-based data tier that becomes a cluster-based resource. FVP virtualizes server-side flash devices such as SSDs or PCIe flash devices (or both) and pools these resources into a data tier that is accessible to all the hosts in the cluster. One feature that stands out is remote access. By allowing access to remote devices, FVP allows the cluster to migrate virtual machines around while still offering performance acceleration. Therefore, cluster features such as HA, DRS and Storage DRS are fully supported when using FVP.

Unlike other server-based flash solutions, FVP accelerates both read and write operations, turning the flash pool into a “data-in-motion tier”. All hot data exists in this tier, which turns the compute layer into an all-IOPS-providing platform. Data that is at rest is moved to the storage array level, turning that layer into the capacity platform. By keeping the I/O operations as close to the source (the virtual machines) as possible, performance is increased while the traffic load on the storage platform is reduced. Filtering out read I/Os also changes the traffic pattern to the array, allowing the array to focus more on the writes.
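To make the "filtering out read I/Os" idea a bit more concrete, here is a minimal toy model in Python. It is emphatically not how FVP is implemented (FVP lives inside the hypervisor and its internals were not shown); `BackingArray` and `FlashTier` are hypothetical names, the cache is a plain LRU, and writes are passed through to the array for simplicity. It only illustrates how a flash tier near the workload changes the I/O pattern the array sees.

```python
from collections import OrderedDict


class BackingArray:
    """Stand-in for the shared storage array; counts the I/Os that reach it."""

    def __init__(self):
        self.blocks = {}
        self.reads = 0
        self.writes = 0

    def read(self, lba):
        self.reads += 1
        return self.blocks.get(lba, b"\x00")

    def write(self, lba, data):
        self.writes += 1
        self.blocks[lba] = data


class FlashTier:
    """Hypothetical LRU cache sitting between the VMs and the array."""

    def __init__(self, array, capacity):
        self.array = array
        self.capacity = capacity
        self.cache = OrderedDict()  # lba -> data, oldest entry first

    def _insert(self, lba, data):
        self.cache[lba] = data
        self.cache.move_to_end(lba)
        if len(self.cache) > self.capacity:
            self.cache.popitem(last=False)  # evict the least recently used block

    def read(self, lba):
        if lba in self.cache:
            # Hit: served from flash, the array never sees this read.
            self.cache.move_to_end(lba)
            return self.cache[lba]
        # Miss: fetch from the array once, then keep the block hot.
        data = self.array.read(lba)
        self._insert(lba, data)
        return data

    def write(self, lba, data):
        # Write-through in this sketch: the array gets every write,
        # and the block is kept in flash for later reads.
        self.array.write(lba, data)
        self._insert(lba, data)


array = BackingArray()
tier = FlashTier(array, capacity=2)
tier.write(1, b"hot")
for _ in range(100):
    tier.read(1)
print(array.reads, array.writes)
```

In this toy run the hundred reads are all absorbed by the flash tier, so the array only ever sees the single write.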

Another great option is the ability to configure multiple protection levels when using write-back. Data is synchronously replicated to remote devices. During the tech preview Satyam and Poojan provided some insights on the available protection levels; however, I’m not sure if I’m allowed to share these publicly. For more information about FVP, visit Pernixdata.com.
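Since the actual protection levels are not public, the following Python sketch only shows the general idea of write-back with synchronous replication, under my own assumptions. `Host`, `WriteBackVM` and the `replicas` parameter are hypothetical names and say nothing about FVP's real design: a write is placed on local flash and on the chosen number of peer hosts before it counts as acknowledged, and it is destaged to the array later.

```python
class Host:
    """Hypothetical host with a local flash device."""

    def __init__(self, name):
        self.name = name
        self.flash = {}  # lba -> data
        self.alive = True


class WriteBackVM:
    """Toy write-back path: acknowledge after flash copies, destage later."""

    def __init__(self, local, peers, replicas, array):
        if replicas > len(peers):
            raise ValueError("not enough peer hosts for the requested replicas")
        self.local = local
        self.peers = peers[:replicas]  # assumed: a fixed set of replica hosts
        self.array = array             # dict standing in for the storage array
        self.dirty = set()             # blocks not yet written to the array

    def write(self, lba, data):
        self.local.flash[lba] = data
        for peer in self.peers:
            if not peer.alive:
                raise RuntimeError("replica host unavailable: " + peer.name)
            peer.flash[lba] = data     # synchronous copy to a remote device
        self.dirty.add(lba)
        # Only at this point would the write be acknowledged to the VM.

    def destage(self):
        # Use the local copy if the host survived, otherwise a live replica.
        candidates = [self.local] + self.peers
        source = next(h for h in candidates if h.alive)
        for lba in sorted(self.dirty):
            self.array[lba] = source.flash[lba]
        self.dirty.clear()


array = {}
a, b, c = Host("esx-a"), Host("esx-b"), Host("esx-c")
vm = WriteBackVM(local=a, peers=[b, c], replicas=1, array=array)
vm.write(7, b"x")   # lands on esx-a and esx-b, not yet on the array
a.alive = False     # the local host fails before destaging
vm.destage()        # the replica on esx-b still holds the data
print(array)
```

The design point the sketch tries to capture is the trade-off behind a protection level: more replicas mean more hosts that can fail before dirty data is lost, at the cost of an extra network round trip per write.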

The beauty of FVP is that it’s not a virtual appliance and that it does not require any agents installed in the guest OS. FVP is embedded inside the hypervisor. For me, this is the reason to believe that this “data-in-motion tier” is only the beginning for PernixData. By having insight into the hypervisor and understanding the data flow of the virtual machines, FVP can become a true platform that accelerates all types of I/O. I do not see any reason why FVP would not be able to replicate/encrypt/duplicate any type of input and output of a virtual machine. 🙂

As you can see, I’m quite excited by this technology. I believe FVP is as revolutionary/disruptive as vMotion. It might not be as “flashy” (forgive the pun) as vMotion, but it sure is exciting to know that the only limit on use cases is the limit of your imagination. I truly believe this technology will revolutionize virtual infrastructure ecosystem design.

PernixData Flash Virtualization Platform will revolutionize virtual infrastructure ecosystem design

Nice post and analysis. Great technology and very disruptive!

Nice introductory write up Frank. I would agree that FVP is an exciting leap forward. Looking forward to getting involved with the beta.

Mark

From your description, it looks quite similar to the tech preview of Software Defined Storage aka vSAN aka vSphere Distributed Storage. Is this the beginning of a flood of 3rd party hypervisor-embedded solutions? Very interesting to watch…

Will VMware acquire PernixData to complete its vision of SDDC?

This seems to be something that VMware ought to be doing, and maybe it could even achieve better results through tighter kernel integration? I agree that this seems like a perfect acquisition target for VMware.

This write-up is excellent and I can see this company creating a true niche in the use of a flash pool. What a revolution. Let’s hope for some big success, as I admire Satyam Vaghani and Co for making true innovations in the virtualised area.