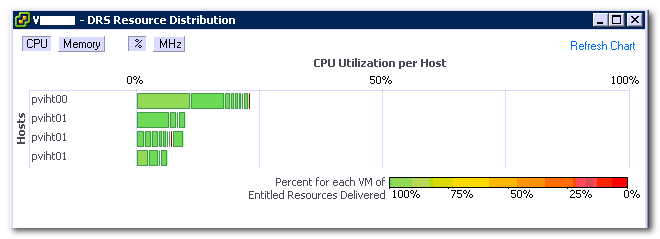

I am often asked why a virtual machine is not receiving resources while the ESXi host is lightly utilized and accumulating idle time. This behavior is typically observed while reviewing the DRS distribution chart or the Host summary tab in the vSphere Client.

A common misconception is that low utilization (low MHz) equals scheduling opportunities. Before focusing on the complexities of scheduling and workload behavior, let’s begin by reviewing the CPU distribution chart.

The chart displays, per host, the sum of the utilization of all active virtual machines. This means that in order to reach 100% CPU utilization on the host, every active vCPU on the host needs to consume 100% of its assigned physical CPU (pCPU). For example, an ESXi host equipped with two quad-core CPUs needs to run eight vCPUs simultaneously, and each vCPU must consume 100% of “its” physical CPU. Generally this is a very rare condition and is only seen during boot storms or incorrectly configured scheduled anti-virus scanning.
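To make the arithmetic concrete, here is a minimal sketch of how such a host-level percentage comes about. The function name, the 2,000 MHz clock speed, and the sample MHz values are assumptions chosen for illustration; they are not taken from the vSphere Client.

```python
def host_cpu_utilization(vcpu_mhz_used, pcpu_count, pcpu_mhz):
    """Return host CPU utilization as a fraction of total pCPU capacity."""
    capacity_mhz = pcpu_count * pcpu_mhz
    return sum(vcpu_mhz_used) / capacity_mhz


# Two quad-core CPUs = 8 pCPUs; 2,000 MHz per core is an assumed figure.
PCPU_COUNT = 8
PCPU_MHZ = 2000

# Eight vCPUs, each consuming a full physical core.
full = host_cpu_utilization([2000] * 8, PCPU_COUNT, PCPU_MHZ)

# Four active vCPUs: two saturated, two lightly loaded.
light = host_cpu_utilization([2000, 2000, 400, 400], PCPU_COUNT, PCPU_MHZ)

print(f"{full:.0%}")   # 100%
print(f"{light:.0%}")  # 30%
```

In the second case the host shows only 30%, even though two of the vCPUs are fully occupying their physical cores.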

But what causes latency (ready time) during low host utilization? Let’s take a closer look at some common factors that affect or prohibit the delivery of the entitled resources:

- Number of physical CPUs available in the system

- Number of active virtual CPUs

- vSMP

- CPU scheduler behavior and vCPU utilization

- Load correlation and load synchronicity

- Local-host CPU scheduler behavior

Number of physical CPUs: To schedule a virtual CPU (vCPU), a physical CPU (pCPU) needs to be available. If more vCPUs are active than there are pCPUs available, the CPU scheduler may need to queue virtual machines behind other virtual machines.

Number of active virtual CPUs: The keyword is active. An ESXi host only needs to schedule a virtual machine if it is actively requesting CPU resources, contrary to memory, where memory pages can exist without being used. Many virtual machines can run on a host without actively requesting CPU time. Queuing occurs when the number of active vCPUs exceeds the number of physical CPUs.
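The relationship between active vCPUs, queuing, and the displayed utilization can be sketched as follows. This is a simplified model, not the ESXi scheduler: it assumes every active vCPU wants a full pCPU for the whole sampling interval, and the sample values are made up.

```python
def queue_depth_per_interval(active_vcpus, pcpu_count):
    """Number of vCPUs that cannot be placed on a pCPU in each interval."""
    return [max(0, active - pcpu_count) for active in active_vcpus]


def average_utilization(active_vcpus, pcpu_count):
    """Average fraction of pCPUs that are busy across all intervals."""
    busy = sum(min(active, pcpu_count) for active in active_vcpus)
    return busy / (pcpu_count * len(active_vcpus))


# Hypothetical count of active vCPUs in eight consecutive intervals
# on a host with 8 pCPUs.
samples = [2, 1, 12, 2, 1, 0, 11, 1]

queued = queue_depth_per_interval(samples, pcpu_count=8)
utilization = average_utilization(samples, pcpu_count=8)

print(queued)                 # [0, 0, 4, 0, 0, 0, 3, 0]
print(f"{utilization:.0%}")   # 36%
```

The host averages roughly 36% utilization, yet vCPUs had to wait in two of the eight intervals.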

vSMP: (Related to the previous bullet) Virtual machines can contain multiple virtual processors. In the past, a vSMP virtual machine could experience latency due to the requirement of co-scheduling. Co-scheduling is the process of scheduling a set of processes on different physical CPUs at the same time. In vSphere 4.1, an advanced form of co-scheduling (relaxed co-scheduling) was introduced, which reduced this latency radically. However, ESX still needs to co-schedule vCPUs occasionally. This is due to the internal workings of the guest OS, which expects the CPUs it manages to run at the same pace. In a virtualized environment, a vCPU is an entity that can be scheduled and unscheduled independently of the sibling vCPUs belonging to the same virtual machine, so the vCPUs might not make the same progress. If the difference in progress between the sibling vCPUs of a VM becomes too large, it can cause problems in the guest OS. To avoid this, the CPU scheduler will occasionally schedule all sibling vCPUs together. This behavior usually occurs if a virtual machine is oversized and does not host multithreaded applications. The “impact of oversized virtual machine” series offers more information on right-sizing virtual machines.
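The idea of progress differences between sibling vCPUs can be illustrated with a small sketch. It is a conceptual model only: the function names, the way progress is measured, and the 3 ms threshold are assumptions for illustration and do not reflect the actual ESX implementation or its tunables.

```python
# Assumed threshold for illustration only; not an ESX setting.
SKEW_THRESHOLD_MS = 3.0


def skew_ms(progress_ms):
    """Difference in progress between the fastest and slowest sibling vCPU."""
    return max(progress_ms.values()) - min(progress_ms.values())


def needs_co_scheduling(progress_ms, threshold_ms=SKEW_THRESHOLD_MS):
    """True when sibling vCPUs have drifted too far apart in this model."""
    return skew_ms(progress_ms) > threshold_ms


# Hypothetical progress (ms of guest execution) per sibling vCPU.
even_progress = {"vcpu0": 40.0, "vcpu1": 39.0, "vcpu2": 38.5, "vcpu3": 40.0}
uneven_progress = {"vcpu0": 40.0, "vcpu1": 31.0, "vcpu2": 39.0, "vcpu3": 40.0}

print(needs_co_scheduling(even_progress))    # False
print(needs_co_scheduling(uneven_progress))  # True
```

In the second case one sibling has fallen behind, so this model would require the siblings to be scheduled together, which in turn requires several pCPUs to be free at the same moment.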

CPU scheduler behavior and vCPU utilization: The local-host CPU scheduler uses a default time slice (quantum) of 50 milliseconds. A quantum is the amount of time a virtual CPU is allowed to run on a physical CPU before a vCPU of the same priority gets scheduled. While a vCPU is scheduled, that particular pCPU is not usable by other vCPUs, which can introduce queuing.

A small remark is necessary: a vCPU isn’t necessarily scheduled for the full 50 milliseconds. It can block before using up its quantum, which reduces the effective time slice during which the vCPU occupies the physical CPU.
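The effect of the quantum on waiting time can be sketched with a deliberately simple model: a single pCPU, a queue of vCPUs of equal priority, and each vCPU running until it either blocks or exhausts its quantum. The function name and the burst lengths are assumptions for illustration; only the 50 ms default comes from the text above.

```python
QUANTUM_MS = 50  # default time slice mentioned above


def wait_before_first_run(burst_ms, quantum_ms=QUANTUM_MS):
    """Return how long each queued vCPU waits before it first gets the pCPU.

    burst_ms lists, in queue order, how long each vCPU would run before
    blocking. A vCPU never holds the pCPU longer than one quantum per turn.
    """
    waits = []
    clock_ms = 0
    for burst in burst_ms:
        waits.append(clock_ms)
        clock_ms += min(burst, quantum_ms)
    return waits


# Three CPU-bound vCPUs that each use their full quantum.
print(wait_before_first_run([80, 80, 80]))  # [0, 50, 100]

# Two vCPUs that block after 5 ms, followed by a CPU-bound vCPU.
print(wait_before_first_run([5, 5, 80]))    # [0, 5, 10]
```

The third vCPU waits 100 ms behind two CPU-bound neighbors but only 10 ms behind two that block early, which is why the utilization pattern of the other vCPUs matters as much as their number.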

Load correlation and load synchronicity: Load correlation defines the relationship between loads running in different machines. An event can initiate multiple loads; for example, a search query on a front-end web server results in commands in the supporting stack and back end. Load synchronicity is often caused by load correlation but can also exist due to user activity. It’s very common to see spikes in workload at specific hours; think about log-on activity in the morning, for example. And for every action there is an equal and opposite reaction: quite often, load correlation and load synchronicity will introduce periods of collective non-utilization or low utilization, which reduce the displayed CPU utilization.
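A small sketch shows why synchronized load hurts even when the average stays the same. The demand pattern, the number of virtual machines, and the helper names are hypothetical; the model simply counts how many vCPUs want to run in each interval.

```python
def rotate(pattern, shift):
    """Return the demand pattern shifted right by `shift` intervals."""
    shift %= len(pattern)
    if shift == 0:
        return list(pattern)
    return pattern[-shift:] + pattern[:-shift]


def peak_concurrent_demand(vm_demands):
    """Highest number of vCPUs requesting CPU time in any single interval."""
    return max(sum(interval) for interval in zip(*vm_demands))


# A 4-vCPU VM that is busy in one out of four intervals.
pattern = [4, 0, 0, 0]

# Three such VMs whose load peaks at the same moment...
synchronized = [rotate(pattern, 0) for _ in range(3)]
# ...versus the same three VMs with their peaks spread out.
staggered = [rotate(pattern, shift) for shift in range(3)]

synchronized_peak = peak_concurrent_demand(synchronized)
staggered_peak = peak_concurrent_demand(staggered)

print(synchronized_peak)  # 12
print(staggered_peak)     # 4
```

Both cases generate the same total demand, but on an 8-pCPU host only the synchronized case asks for more vCPUs (12) than there are pCPUs, followed by three intervals in which the host sits idle.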

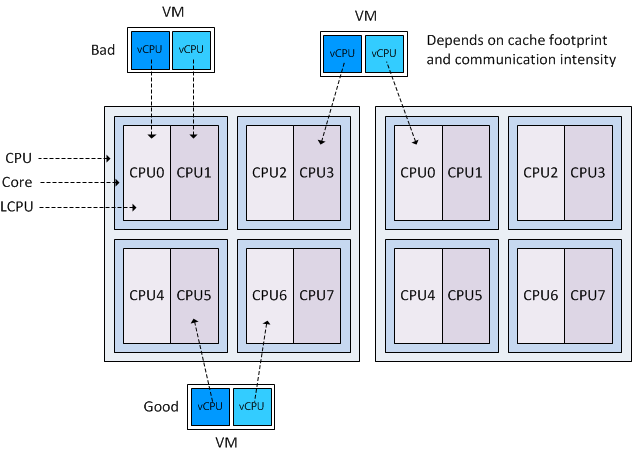

Local-host CPU scheduler behavior: The behavior of the CPU scheduler can impact the scheduling of the virtual CPU. The CPU scheduler prefers to schedule a vCPU on the same pCPU it was scheduled on before, to improve the chance of cache hits. It might choose to ignore an idle pCPU and wait a little so it can schedule the vCPU on the same pCPU again. If ESXi operates on a “Non-Uniform Memory Access” (NUMA) architecture, the NUMA CPU scheduler is active and will affect certain scheduling decisions. The local-host CPU scheduler will also adjust progress and fairness calculations when Intel Hyper-Threading is enabled on the system.

Understanding CPU scheduling behavior can help you avoid latency, while understanding workload behavior and right-sizing your virtual machines can help improve performance. Frankdenneman.nl hosts multiple articles about the CPU scheduler, which can be found here; however, the technical paper “vSphere 4.1 CPU scheduler” is a must-read if you want to learn more about the CPU scheduler.