A lot of interesting material is written about configuring Quality of Service (QoS) on 10GB (converged) networks in Virtual Infrastructures. With the release of vSphere 4.1, VMware introduced a network QoS mechanism called Network I/O Control (NetIOC). The two most popular Blade systems; HP with Flex10 technology and Cisco UCS both offer traffic shaping mechanisms at hardware level.

Both NetIOC and Cisco UCS approach network Quality of Service with a sharing perspective, guaranteeing a minimum amount of bandwidth opposed to the HP Flex-10 technology, which isolates the available bandwidth and dedicate an X amount of bandwidth to a specified NIC.

When allocating bandwidths to the various network traffic streams most admins try to stay on the safe side and over-allocate bandwidth to virtual machine traffic. Obviously it is essential to guarantee enough bandwidth to virtual machines but bandwidth is finite, resulting in less bandwidth available to other types of traffic such as vMotion. Unfortunately by reducing the available bandwidth used for vMotion traffic can ultimately have negative effect on the performance of the virtual machines.

MaxMovesPerHost

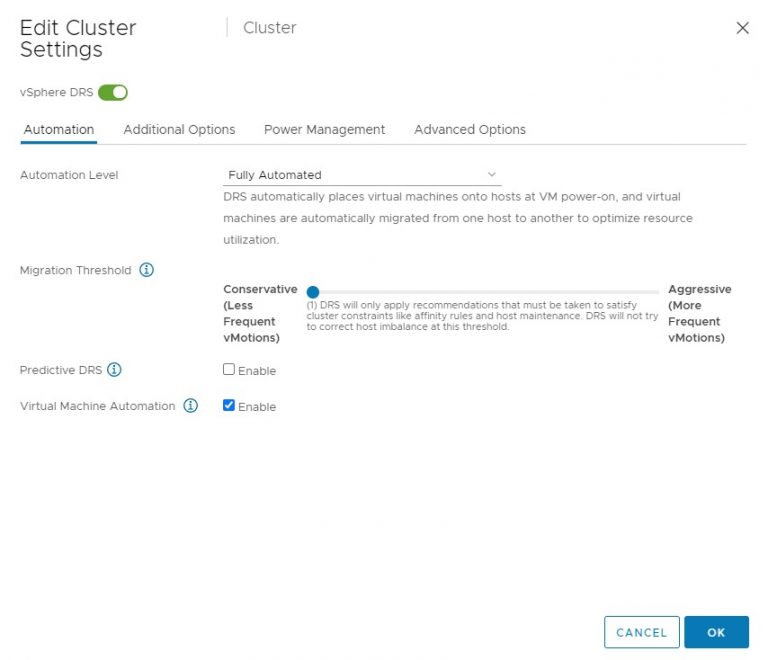

In vSphere 4.1 DRS uses an adaptive technique called MaxMovesPerHost. This technique allows DRS to decide the optimum concurrent vMotions per ESX host for Load-Balancing operations. DRS will adapt the maximum concurrent vMotions per host (8) based upon the average migration time observed from previous migrations. Decreasing bandwidth available for vMotion traffic can result in a lower number of allowed concurrent vMotions.In turn the amount of allowed concurrent vMotions affects the number of migration recommendations generated by DRS. DRS will only calculate and generate the amount of migration recommendation is believes it can complete before the next DRS invocation. It limits the amount of generated migration recommendations, as there is no advantage in generating recommending migrations that cannot be complement before the next DRS invocation. During the next re-evaluation cycle, virtual machine resource demand can have changed rendering the previous recommendations obsolete

By limiting the amount of bandwidth available to vMotion, it can decrease the maximum amount of concurrent vMotions per host and could risk leaving the cluster imbalanced for a longer period of time.

Both NetIOC and Cisco UCS Class of Service (COS) Quality of Service can be used to guarantee a minimum amount of bandwidth available to vMotion during contention. Both techniques allow vMotion traffic to use all the available bandwidth if no contention occurs. HP uses a different approach, isolating and dedicating a specific amount of bandwidth to an adapter and thereby possible restricting specific workloads.

Bred Hedlund wrote an article explaining the fundamental differences in how bandwidth is handled between HP Flex-10 and Cisco UCS.

Cisco UCS intelligent QoS vs. HP Virtual Connect rate limiting

Recommendations for Flex-10

Due to the restrictive behavior of Flex-10, it is recommended to specifically take the adaptive nature of DRS into account and not restricting vMotion traffic too much when shaping network bandwidth for the configured FlexNics. It is recommended to monitor the bandwidth requirements of the virtual machines and adjust the rate limit for virtual machine traffic and vMotion traffic accordingly, reducing the possibility of delaying DRS to reach a steady state when a significant load imbalance in the cluster exits.

Recommendations for NetIOC and UCS QoS

Fortunately the sharing nature of NetIOC and UCS allow other network streams allocate bandwidth during periods without bandwidth contention. Despite this “plays well with other” nature, it is recommended to assign a minimum guarantee amount of bandwidth for vMotion traffic (NetIOC) or a custom Class of Service to the vMotion vNICs (UCS). Chances are that if virtual machines saturate the network, virtual machines are experiencing a high workload and DRS will try to provide the resources the virtual machines are entitled to.

The impact of QoS network traffic on VM performance

2 min read

Do you know of anyone using NetIOC in combination with Traffic Shaping on Flex-10 and just using a single large channel on the Flex-10 side so that vmotion traffic and vm traffic can be on the same Flex-10 channel?