In part 1 of the series of post on the impact of oversized virtual machines NUMA architecture, memory overhead reservation and share levels are reviewed, part 2 zooms in of the impact of memory overhead reservation and share levels on HA and DRS.

Impact of memory overhead reservation on HA Slot size

The VMware High Availability admission control policy “Host failures cluster tolerates” calculates a slot size to determine the maximum amount of virtual machines active in the cluster without violating failover capacity. This admission control policy determines the HA cluster slot size by calculating the largest CPU reservation, largest memory reservation plus it’s memory overhead reservation. If the virtual machine with the largest reservation (which could be an appropriate sized reservation) is oversized, its memory overhead reservation still can substantial impact the slot size.

The HA admission control policy “Percentage of Cluster Resources Reserved” calculate the memory component of its mechanism by summing the reservation plus the memory overhead of each virtual machine. Therefore allowing the memory overhead reservation to even have a bigger impact on admission control than the calculation done by the “Host Failures cluster tolerates” policy.

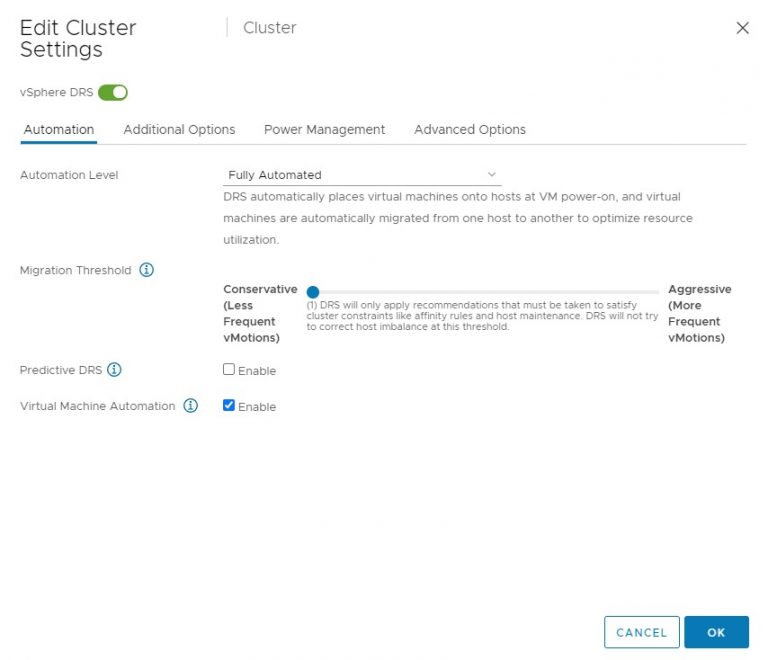

DRS initial placement

DRS will use a worst-case scenario during initial placement. Because DRS cannot determine resource demand of the virtual machine that is not running, DRS assumes that both the memory demand and CPU demand is equal to its configured size. By oversizing virtual machines it will decrease the options in finding a suitable host for the virtual machine. If DRS cannot guarantee the full 100% of the resources provisioned for this virtual machine can be used it will vMotion virtual machines away so that it can power on this single virtual machine. In case there are not enough resources available DRS will not allow the virtual machine to be powered on.

Shares and resource pools

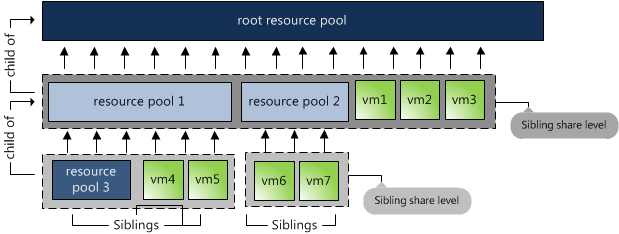

When placing a virtual machine inside a resource pool, its shares will be relative to the other virtual machines (and resource pools) inside the pool. Shares are relative to all the other components sharing the same parent; easier way to put it is to call it sibling share level. Therefore the numeric share values are not directly comparable across pools because they are children of different parents.

By default a resource pool is configured with the same share amount equal to a 4 vCPU, 16GB virtual machine. As mentioned in part 1, shares are relative to the configured size of the virtual machine. Implicitly stating that size equals priority.

Now lets take a look again at the image above. The 3 virtual machines are reparented to the cluster root, next to resource pools 1 and 2. Suppose they are all 4 vCPU 16GB machines, their share values are interpreted in the context of the root pool and they will receive the same priority as resource pool 1 and resource pool2. This is not only wrong, but also dangerous in a denial-of-service sense — a virtual machine running on the same level as resource pools can suddenly find itself entitled to nearly all cluster resources.

Because of default share distribution process we always recommend to avoid placing virtual machines on the same level of resource pools. Unfortunately it might happen that a virtual machine is reparented to cluster root level when manually migrating a virtual machine using the GUI. The current workflow defaults to cluster root level instead of using its current resource pool. Because of this it’s recommended to increase the number of shares of the resource pool to reflect its priority level. More info about shares on resource pools can be found in Duncan’s post on yellow-bricks.com.

Go to Part 3: Impact of oversized virtual machine.

Impact of oversized virtual machines part 2

2 min read

Frank – great series.. I have fought the battle of oversized virtual machines many times so it’s great to have more information to use to argue against them.

The one impact of oversized virtual machines that I find people always forget about is VMKernel swap files. Many people don’t consider the additional storage requirements of the swap file when designing the environment and end up getting surprised later. It becomes especially difficult when the oversized VM becomes the template and now every VM is oversized.

Looking forward to part 3…

Matt

Hi Matt,

Thanks! Swap files are discusses in part 3. 🙂

Good stuff Frank.

We are running into this challenge mainly for political reason.

One thing that I came across is that over sized VMs also create a potential and critical “worst case scenario”

If the normal memory load on a host is 70% but in fact the host memory is over allocated at 120%. Something like a memory leak in a process inside a vm or like a backup agent in which the default settings is to use all available memory can bring a host and the performance to the ground.

Nothing like a saturday morning, coffee and new post from you (…and others ;-))

Cheers

Greg